Scraping product reviews with Python (Singles' Day is almost here)

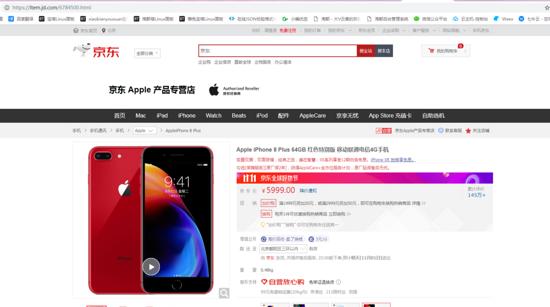

Let's scrape some reviews from JD.com (京东). First, a screenshot or two to study.

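After a bit of digging, it turns out that a product's ID is simply the number right before `.html` in its URL. We just copy those IDs and paste them into the script.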
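If you would rather not copy the IDs by hand, here is a minimal sketch of pulling the ID out of a product URL. The helper name `extract_product_id` and the `item.jd.com` URL shape are assumptions made for illustration; the only rule taken from the post is "the number right before `.html`".

```python
import re


def extract_product_id(url: str) -> str:
    """Return the digits that sit directly in front of '.html' in a product URL."""
    match = re.search(r'(\d+)\.html', url)
    if match is None:
        raise ValueError(f'no product ID found in {url!r}')
    return match.group(1)


# Assumed URL shape; 6784500 is one of the IDs used later in this post.
print(extract_product_id('https://item.jd.com/6784500.html'))  # prints 6784500
```

The ID is kept as a string, which matches how the IDs are stored in the list passed to the crawler later on.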

1. Modify and extend the code from the previous post

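```python
import json
import random
import time
from queue import Queue
from threading import Thread

import requests


class Spider():
    def __init__(self):
        # The attributes from the previous post (qurl, user_agent, notproxy,
        # thread_num, first_running) stay as they were.
        # score: 1 = negative, 2 = neutral, 3 = positive, 0 = all reviews
        # This URL returns JD's JSONP callback data; we fill in the product ID and the review page number.
        self.start_url = 'https://sclub.jd.com/comment/productPageComments.action?callback=fetchJSON_comment98vv30672&productId={}&score=0&sortType=5&page={}&pageSize=10&isShadowSku=0&rid=0&fold=1'
        # Add a new queue for the review pages
        self.pageQurl = Queue()
        # This was a list in the previous post; now it is a dict
        self.data = dict()
```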
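The query string in `start_url` is easier to read when it is spelled out as a dict. The sketch below rebuilds the same URL with `urllib.parse.urlencode` and exposes `score` as an argument, so you could ask for only negative reviews, for example. The function name `build_comment_url` is made up for this illustration, and the parameter values are simply the ones hard-coded in the URL above.

```python
from urllib.parse import urlencode

BASE_URL = 'https://sclub.jd.com/comment/productPageComments.action'


def build_comment_url(product_id, page, score=0):
    """Build the review URL; score: 0 = all, 1 = negative, 2 = neutral, 3 = positive."""
    params = {
        'callback': 'fetchJSON_comment98vv30672',
        'productId': product_id,
        'score': score,
        'sortType': 5,
        'page': page,
        'pageSize': 10,
        'isShadowSku': 0,
        'rid': 0,
        'fold': 1,
    }
    # dicts keep insertion order, so the parameters come out in the order above
    return BASE_URL + '?' + urlencode(params)


print(build_comment_url('6784500', 1))
print(build_comment_url('6784500', 1, score=1))  # negative reviews only
```

With the default `score=0` this produces the same string as `self.start_url.format('6784500', 1)`.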

2. Rewrite the whole parse_first function from the previous post

```python
def parse_first(self):
    if not self.qurl.empty():
        goodsid = self.qurl.get()
        url = self.start_url.format(goodsid, 1)
        print('parse_first', url)
        try:
            r = requests.get(url, headers={'User-Agent': random.choice(self.user_agent)}, proxies=self.notproxy, verify=False)
            # The response is encoded as GBK, not UTF-8
            r.encoding = 'GBK'
            if r.status_code == 200:
                # Strip the JSONP callback wrapper from the response
                res = r.text.replace('fetchJSON_comment98vv30672(', '').replace(');', '').replace('false', '0').replace('true', '1')
                res = json.loads(res)
                lastPage = int(res['maxPage'])
                # Queue review pages 1 to 4 (the slice [1:5] stops before 5; fewer if maxPage is small)
                for i in range(lastPage)[1:5]:
                    temp = str(goodsid) + ',' + str(i)
                    self.pageQurl.put(temp)
                arr = []
                for j in res['hotCommentTagStatistics']:
                    arr.append({'name': j['name'], 'count': j['count']})
                self.data[str(goodsid)] = {
                    'hotCommentTagStatistics': arr,
                    'poorCountStr': res['productCommentSummary']['poorCountStr'],
                    'generalCountStr': res['productCommentSummary']['generalCountStr'],
                    'goodCountStr': res['productCommentSummary']['goodCountStr'],
                    'goodRate': res['productCommentSummary']['goodRate'],
                    'comments': []
                }
                # Move on to the next product ID in the queue
                self.parse_first()
            else:
                self.first_running = False
                print('IP blocked')
        except Exception as e:
            self.first_running = False
            print('Request through the proxy IP failed', str(e))
    else:
        self.first_running = False
```
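One thing to watch in `parse_first`: the chained `replace` calls also touch the review text itself, so a review that happens to contain `);`, `true` or `false` gets altered. Here is a hedged alternative that only peels off the outer `callback( ... );` wrapper and lets `json.loads` handle `true`/`false` on its own. The helper name `parse_jsonp` and the sample payload (including the `isTop` field) are made up for the demonstration; they are not taken from a real JD response.

```python
import json
import re


def parse_jsonp(text: str):
    """Strip an outer `callback( ... );` wrapper and parse the JSON inside it."""
    match = re.match(r'^\s*[\w$.]+\s*\((.*)\)\s*;?\s*$', text, re.DOTALL)
    if match is None:
        raise ValueError('response does not look like JSONP')
    return json.loads(match.group(1))


# Made-up payload: note the ');' inside the review text and the JSON literal false
sample = ('fetchJSON_comment98vv30672({"maxPage": 3, '
          '"comments": [{"content": "nice :);", "isTop": false}]});')
data = parse_jsonp(sample)
print(data['maxPage'])                 # prints 3
print(data['comments'][0]['content'])  # prints nice :);
print(data['comments'][0]['isTop'])    # prints False
```

Because the pattern matches any callback name, it keeps working if the `callback=` value in the URL is changed.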

3. Rewrite the whole parse_second function from the previous post

```python
def parse_second(self):
    while self.first_running or not self.pageQurl.empty():
        if not self.pageQurl.empty():
            # Each queue item is 'productId,page'
            arr = self.pageQurl.get().split(',')
            url = self.start_url.format(arr[0], arr[1])
            print(url)
            try:
                r = requests.get(url, headers={'User-Agent': random.choice(self.user_agent)}, proxies=self.notproxy, verify=False)
                r.encoding = 'GBK'
                if r.status_code == 200:
                    res = r.text.replace('fetchJSON_comment98vv30672(', '').replace(');', '').replace('false', '0').replace('true', '1')
                    try:
                        res = json.loads(res)
                        for i in res['comments']:
                            images = []
                            videos = []
                            # Record the images and videos attached to the review
                            if i.get('images'):
                                for j in i['images']:
                                    images.append({'imgUrl': j['imgUrl']})
                            if i.get('videos'):
                                for k in i['videos']:
                                    videos.append({'mainUrl': k['mainUrl'], 'remark': k['remark']})
                            # Record the details of the review and the reviewer
                            mydict = {
                                'referenceName': i['referenceName'],
                                'content': i['content'],
                                'creationTime': i['creationTime'],
                                'score': i['score'],
                                'userImage': i['userImage'],
                                'nickname': i['nickname'],
                                'userLevelName': i['userLevelName'],
                                'productColor': i['productColor'],
                                'productSize': i['productSize'],
                                'userClientShow': i['userClientShow'],
                                'images': images,
                                'videos': videos
                            }
                            self.data[arr[0]]['comments'].append(mydict)
                        # Sleep the thread for a random interval
                        time.sleep(random.random() * 5)
                    except Exception:
                        print('Could not parse the response into an object', res)
            except Exception as e:
                print('Request failed', str(e))
```
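A caveat about the loop in `parse_second`: with several threads, one thread can see `empty()` return `False`, lose the last item to another thread, and then sit in `get()` forever. A possible way around it, sketched here under my own assumptions, is to call `get` with a timeout and treat `queue.Empty` as "try again". The names `drain`, `producer_done` and `results` are invented for this demo, and a `threading.Event` stands in for the `first_running` flag.

```python
import queue
import threading


def drain(page_queue, producer_done, results):
    # Keep going while the producer is still working or items remain
    while not producer_done.is_set() or not page_queue.empty():
        try:
            # Never block forever: give up after 0.1 s and re-check the condition
            item = page_queue.get(timeout=0.1)
        except queue.Empty:
            continue
        results.append(item)  # stand-in for fetching and parsing the page


page_queue = queue.Queue()
producer_done = threading.Event()
results = []

workers = [threading.Thread(target=drain, args=(page_queue, producer_done, results))
           for _ in range(3)]
for w in workers:
    w.start()

# Producer side: same 'productId,page' strings that parse_first puts on the queue
for page in range(1, 5):
    page_queue.put('6784500,' + str(page))
producer_done.set()

for w in workers:
    w.join()
print(sorted(results))
```

Every worker exits once the producer has finished and the queue is empty, so `join()` returns even when there are more threads than pages.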

4. Modify part of the run function

```python
@run_time
def run(self):
    # IDs of the JD products to crawl, kept in a list
    goodslist = ['6784500', '31426982482', '7694047']
    for i in goodslist:
        self.qurl.put(i)
    ths = []
    th1 = Thread(target=self.parse_first, args=())
    th1.start()
    ths.append(th1)
    for _ in range(self.thread_num):
        th = Thread(target=self.parse_second)
        th.start()
        ths.append(th)
    for th in ths:
        # Wait for the thread to finish
        th.join()
    s = json.dumps(self.data, ensure_ascii=False, indent=4)
    with open('jdComment.json', 'w', encoding='utf-8') as f:
        f.write(s)
    print('Data crawling is finished.')
```
