linux换锁教程(深入讲解linuxkermelRCU以及读写锁)

信号量有一个很明显的缺点,没有区分临界区的读写属性,读写锁允许多个线程进程并发的访问临界区,但是写访问只限于一个线程,在多处理器系统中允许多个读者访问共享资源,但是写者有排他性,读写锁的特性如下:允许多个读者同时访问临界区,但是同一时间不能进入;同一时刻只允许一个写者进入临界区;读者和写者不能同时进入临界区。读写锁也有关闭中断和下半部的版本。

RCU:read-copy-update 。。。。。。。。。。。。。。。。。。。。

问题:rcu相比读写锁,解决了什么问题? rcu的基本原理?1、由于内核中spinlock mutex 等都使用了原子操作指令,即原子的访问内存,但是当多CPU 竞争访问临界区时会让cpu的cache命中率下降,性能下降。同时读写锁有个缺陷,读者和写者不能同时存在。

rcu实现的目标就是要解决这个问题,为了使线程同步开销小。不需要原子操作以及内存屏障而访问数据,把同步的问题交给写者线程,写者线程等待所有的读者线程完成后才会吧旧数据销毁。当有多个写者线程存在时,需要额外的保护机制。

原理RCU原理:简单理解为 记录了所有指向共享数据的指针使用者,当要修改共享数据时,先创建一个副本,在副本中修改。所有读者离开临界区后,指针指向新的修改副本后的地方,并且删除旧数据。

官方描述:RCU实际上是一种改进的rwlock,读者几乎没有什么同步开销,它不需要锁,不使用原子指令,因此不会导致锁竞争,内存延迟以及流水线停滞。不需要锁也使得使用更容易,因为死锁问题就不需要考虑了。

- 写者的同步开销比较大,它需要延迟数据结构的释放,复制被修改的数据结构,它也必须使用某种锁机制同步并行的其它写者的修改操作。

- 读者必须提供一个信号给写者以便写者能够确定数据可以被安全地释放或修改的时机。

- 有一个专门的垃圾收集器来探测读者的信号,一旦所有的读者都已经发送信号告知它们都不在使用被RCU保护的数据结构,垃圾收集器就调用回调函数完成最后的数据释放或修改操作。

目前在内核中链表使用RCU较多。

在经典RCU中,RCU读侧临界部分由rcu_read_lock() 和rcu_read_unlock()界定,它们可以嵌套。

对应的同步更新原语为synchronize_rcu(),还有同义的synchronize_net(),等待当前正执行的RCU读侧闻临界部分运行完成。等待的时间称为“宽限期”。

异步更新侧原语call_rcu()在宽限期之后触发指定的函数,如:call_rcu(p,f)调用触发回调函数f(p)。有些情况,如:当卸载使用call_rcu()的模块,必须等待所有RCU回调函数完成,原语rcu_barrier()起该作用。在“RCU BH”列中,rcu_read_lock_bh() 和rcu_read_unlock_bh()界定读侧临界部分,call_rcu_bh()在宽限期后触发指定的函数。注意:RCU BH没有同步接口synchronize_rcu_bh(),如果需要,用户很容易添加同步接口函数。

直接操作指针的原语rcu_assign_pointer()和rcu_dereference()用于创建RCU保护的非链表数据结构,如:数组和树

NOTE:读者 在访问被RCU保护的共享数据期间不能被阻塞,这是RCU机制得以实现的一个基本前提,也就说当读者在引用被RCU保护的共享数据期间,读者所在的CPU不能发生上下文切换,spinlock和rwlock都需要这样的前提。 写者 在访问被RCU保护的共享数据时不需要和读者竞争任何锁,只有在有多于一个写者的情况下需要获得某种锁以与其他写者同步。写者修改数据前首先拷贝一个被修改元素的副本,然后在副本上进行修改,修改完毕后它向垃圾回收器注册一个回调函数以便在适当的时机执行真正的修改操作。等待适当时机的这一时期称为宽限期(grace period),而CPU发生了上下文切换称为经历一个quiescent state,grace period就是所有CPU都经历一次quiescent state所需要的等待的时间。垃圾收集器就是在grace period之后调用写者注册的回调函数来完成真正的数据修改或数据释放操作的。

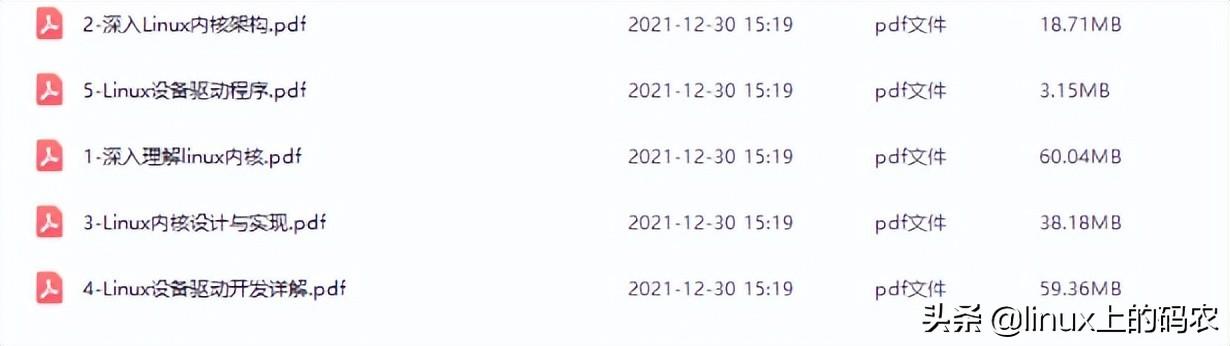

更多linux内核视频教程文档资料免费领取后台私信【内核】自行获取.

/*

Please note that the "What is RCU?" LWN series is an excellent place

to start learning about RCU:

1. What is RCU, Fundamentally? http://lwn.net/Articles/262464/

2. What is RCU? Part 2: Usage http://lwn.net/Articles/263130/

3. RCU part 3: the RCU API http://lwn.net/Articles/264090/

4. The RCU API, 2010 Edition http://lwn.net/Articles/418853/

What is RCU?

RCU is a synchronization mechanism that was added to the Linux kernel

during the 2.5 development effort that is optimized for read-mostly

situations. Although RCU is actually quite simple once you understand it,

getting there can sometimes be a challenge. Part of the problem is that

most of the past descriptions of RCU have been written with the mistaken

assumption that there is "one true way" to describe RCU. Instead,

the experience has been that different people must take different paths

to arrive at an understanding of RCU. This document provides several

different paths, as follows:

1. RCU OVERVIEW

2. WHAT IS RCU'S CORE API?

3. WHAT ARE SOME example USES OF CORE RCU API?

4. WHAT IF MY UPDATING THREAD CANNOT BLOCK?

5. WHAT ARE SOME SIMPLE IMPLEMENTATIONS OF RCU?

6. ANALOGY WITH READER-WRITER LOCKING

7. FULL LIST OF RCU APIs

8. ANSWERS TO QUICK QUIZZES

People who prefer starting with a conceptual overview should focus on

Section 1, though most readers will profit by reading this section at

some point. People who prefer to start with an API that they can then

experiment with should focus on Section 2. People who prefer to start

with example uses should focus on Sections 3 and 4. People who need to

understand the RCU implementation should focus on Section 5, then dive

into the kernel source code. People who reason best by analogy should

focus on Section 6. Section 7 serves as an index to the docbook API

documentation, and Section 8 is the traditional answer key.

So, start with the section that makes the most sense to you and your

preferred method of learning. If you need to know everything about

everything, feel free to read the whole thing -- but if you are really

that type of person, you have perused the source code and will therefore

never need this document anyway. ;-)

1. RCU OVERVIEW

The basic idea behind RCU is to split updates into "removal" and

"reclamation" phases. The removal phase removes references to data items

within a data structure (possibly by replacing them with references to

new versions of these data items), and can run concurrently with readers.

The reason that it is safe to run the removal phase concurrently with

readers is the semantics of modern CPUs guarantee that readers will see

either the old or the new version of the data structure rather than a

partially updated reference. The reclamation phase does the work of reclaiming

(e.g., freeing) the data items removed from the data structure during the

removal phase. Because reclaiming data items can disrupt any readers

concurrently referencing those data items, the reclamation phase must

not start until readers no longer hold references to those data items.

Splitting the update into removal and reclamation phases permits the

updater to perform the removal phase immediately, and to defer the

reclamation phase until all readers active during the removal phase have

completed, either by blocking until they finish or by registering a

callback that is invoked after they finish. Only readers that are active

during the removal phase need be considered, because any reader starting

after the removal phase will be unable to gain a reference to the removed

data items, and therefore cannot be disrupted by the reclamation phase.

So the typical RCU update sequence goes something like the following:

a. Remove pointers to a data structure, so that subsequent

readers cannot gain a reference to it.

b. Wait for all previous readers to complete their RCU read-side

critical sections.

c. At this point, there cannot be any readers who hold references

to the data structure, so it now may safely be reclaimed

(e.g., kfree()d).

Step (b) above is the key idea underlying RCU's deferred destruction.

The ability to wait until all readers are done allows RCU readers to

use much lighter-weight synchronization, in some cases, absolutely no

synchronization at all. In contrast, in more conventional lock-based

schemes, readers must use heavy-weight synchronization in order to

prevent an updater from deleting the data structure out from under them.

This is because lock-based updaters typically update data items in place,

and must therefore exclude readers. In contrast, RCU-based updaters

typically take advantage of the fact that writes to single aligned

pointers are atomic on modern CPUs, allowing atomic insertion, removal,

and replacement of data items in a linked structure without disrupting

readers. Concurrent RCU readers can then continue accessing the old

versions, and can dispense with the atomic operations, memory barriers,

and communications cache misses that are so expensive on present-day

SMP computer systems, even in absence of lock contention.

In the three-step procedure shown above, the updater is performing both

the removal and the reclamation step, but it is often helpful for an

entirely different thread to do the reclamation, as is in fact the case

in the Linux kernel's directory-entry cache (dcache). Even if the same

thread performs both the update step (step (a) above) and the reclamation

step (step (c) above), it is often helpful to think of them separately.

For example, RCU readers and updaters need not communicate at all,

but RCU provides implicit low-overhead communication between readers

and reclaimers, namely, in step (b) above.

So how the heck can a reclaimer tell when a reader is done, given

that readers are not doing any sort of synchronization operations???

Read on to learn about how RCU's API makes this easy.

2. WHAT IS RCU'S CORE API?

The core RCU API is quite small:

a. rcu_read_lock()

b. rcu_read_unlock()

c. synchronize_rcu() / call_rcu()

d. rcu_assign_pointer()

e. rcu_dereference()

There are many other members of the RCU API, but the rest can be

expressed in terms of these five, though most implementations instead

express synchronize_rcu() in terms of the call_rcu() callback API.

The five core RCU APIs are described below, the other 18 will be enumerated

later. See the kernel docbook documentation for more info, or look directly

at the function header comments.

rcu_read_lock()

void rcu_read_lock(void);

Used by a reader to inform the reclaimer that the reader is

entering an RCU read-side critical section. It is illegal

to block while in an RCU read-side critical section, though

kernels built with CONFIG_PREEMPT_RCU can preempt RCU

read-side critical sections. Any RCU-protected data structure

accessed during an RCU read-side critical section is guaranteed to

remain unreclaimed for the full duration of that critical section.

Reference counts may be used in conjunction with RCU to maintain

longer-term references to data structures.

rcu_read_unlock()

void rcu_read_unlock(void);

Used by a reader to inform the reclaimer that the reader is

exiting an RCU read-side critical section. Note that RCU

read-side critical sections may be nested and/or overlapping.

synchronize_rcu()

void synchronize_rcu(void);

Marks the end of updater code and the beginning of reclaimer

code. It does this by blocking until all pre-existing RCU

read-side critical sections on all CPUs have completed.

Note that synchronize_rcu() will -not- necessarily wait for

any subsequent RCU read-side critical sections to complete.

For example, consider the following sequence of events:

CPU 0 CPU 1 CPU 2

----------------- ------------------------- ---------------

1. rcu_read_lock()

2. enters synchronize_rcu()

3. rcu_read_lock()

4. rcu_read_unlock()

5. exits synchronize_rcu()

6. rcu_read_unlock()

To reiterate, synchronize_rcu() waits only for ongoing RCU

read-side critical sections to complete, not necessarily for

any that begin after synchronize_rcu() is invoked.

Of course, synchronize_rcu() does not necessarily return

-immediately- after the last pre-existing RCU read-side critical

section completes. For one thing, there might well be scheduling

delays. For another thing, many RCU implementations process

requests in batches in order to improve efficiencies, which can

further delay synchronize_rcu().

Since synchronize_rcu() is the API that must figure out when

readers are done, its implementation is key to RCU. For RCU

to be useful in all but the most read-intensive situations,

synchronize_rcu()'s overhead must also be quite small.

The call_rcu() API is a callback form of synchronize_rcu(),

and is described in more detail in a later section. Instead of

blocking, it registers a function and argument which are invoked

after all ongoing RCU read-side critical sections have completed.

This callback variant is particularly useful in situations where

it is illegal to block or where update-side performance is

critically important.

However, the call_rcu() API should not be used lightly, as use

of the synchronize_rcu() API generally results in simpler code.

In addition, the synchronize_rcu() API has the nice property

of automatically limiting update rate should grace periods

be delayed. This property results in system resilience in face

of denial-of-service attacks. Code using call_rcu() should limit

update rate in order to gain this same sort of resilience. See

checklist.txt for some approaches to limiting the update rate.

rcu_assign_pointer()

typeof(p) rcu_assign_pointer(p, typeof(p) v);

Yes, rcu_assign_pointer() -is- implemented as a macro, though it

would be cool to be able to declare a function in this manner.

(Compiler experts will no doubt disagree.)

The updater uses this function to assign a new value to an

RCU-protected pointer, in order to safely communicate the change

in value from the updater to the reader. This function returns

the new value, and also executes any memory-barrier instructions

required for a given CPU architecture.

Perhaps just as important, it serves to document (1) which

pointers are protected by RCU and (2) the point at which a

given structure becomes accessible to other CPUs. That said,

rcu_assign_pointer() is most frequently used indirectly, via

the _rcu list-manipulation primitives such as list_add_rcu().

rcu_dereference()

typeof(p) rcu_dereference(p);

Like rcu_assign_pointer(), rcu_dereference() must be implemented

as a macro.

The reader uses rcu_dereference() to fetch an RCU-protected

pointer, which returns a value that may then be safely

dereferenced. Note that rcu_deference() does not actually

dereference the pointer, instead, it protects the pointer for

later dereferencing. It also executes any needed memory-barrier

instructions for a given CPU architecture. Currently, only Alpha

needs memory barriers within rcu_dereference() -- on other CPUs,

it compiles to nothing, not even a compiler directive.

Common coding practice uses rcu_dereference() to copy an

RCU-protected pointer to a local variable, then dereferences

this local variable, for example as follows:

p = rcu_dereference(head.next);

return p->data;

However, in this case, one could just as easily combine these

into one statement:

return rcu_dereference(head.next)->data;

If you are going to be fetching multiple fields from the

RCU-protected structure, using the local variable is of

course preferred. Repeated rcu_dereference() calls look

ugly, do not guarantee that the same pointer will be returned

if an update happened while in the critical section, and incur

unnecessary overhead on Alpha CPUs.

Note that the value returned by rcu_dereference() is valid

only within the enclosing RCU read-side critical section.

For example, the following is -not- legal:

rcu_read_lock();

p = rcu_dereference(head.next);

rcu_read_unlock();

x = p->address; /* BUG!!! */

rcu_read_lock();

y = p->data; /* BUG!!! */

rcu_read_unlock();

Holding a reference from one RCU read-side critical section

to another is just as illegal as holding a reference from

one lock-based critical section to another! Similarly,

using a reference outside of the critical section in which

it was acquired is just as illegal as doing so with normal

locking.

As with rcu_assign_pointer(), an important function of

rcu_dereference() is to document which pointers are protected by

RCU, in particular, flagging a pointer that is subject to changing

at any time, including immediately after the rcu_dereference().

And, again like rcu_assign_pointer(), rcu_dereference() is

typically used indirectly, via the _rcu list-manipulation

primitives, such as list_for_each_entry_rcu().

The following diagram shows how each API communicates among the

reader, updater, and reclaimer.

rcu_assign_pointer()

--------

---------------------->| reader |---------

| -------- |

| | |

| | | Protect:

| | | rcu_read_lock()

| | | rcu_read_unlock()

| rcu_dereference() | |

--------- | |

| updater |<--------------------- |

--------- V

| -----------

----------------------------------->| reclaimer |

-----------

Defer:

synchronize_rcu() & call_rcu()

The RCU infrastructure observes the time sequence of rcu_read_lock(),

rcu_read_unlock(), synchronize_rcu(), and call_rcu() invocations in

order to determine when (1) synchronize_rcu() invocations may return

to their callers and (2) call_rcu() callbacks may be invoked. Efficient

implementations of the RCU infrastructure make heavy use of batching in

order to amortize their overhead over many uses of the corresponding APIs.

There are no fewer than three RCU mechanisms in the Linux kernel; the

diagram above shows the first one, which is by far the most commonly used.

The rcu_dereference() and rcu_assign_pointer() primitives are used for

all three mechanisms, but different defer and protect primitives are

used as follows:

Defer Protect

a. synchronize_rcu() rcu_read_lock() / rcu_read_unlock()

call_rcu() rcu_dereference()

b. synchronize_rcu_bh() rcu_read_lock_bh() / rcu_read_unlock_bh()

call_rcu_bh() rcu_dereference_bh()

c. synchronize_sched() rcu_read_lock_sched() / rcu_read_unlock_sched()

call_rcu_sched() preempt_disable() / preempt_enable()

local_irq_save() / local_irq_restore()

hardirq enter / hardirq exit

NMI enter / NMI exit

rcu_dereference_sched()

These three mechanisms are used as follows:

a. RCU applied to normal data structures.

b. RCU applied to networking data structures that may be subjected

to remote denial-of-service attacks.

c. RCU applied to scheduler and interrupt/NMI-handler tasks.

Again, most uses will be of (a). The (b) and (c) cases are important

for specialized uses, but are relatively uncommon.

3. WHAT ARE SOME EXAMPLE USES OF CORE RCU API?

This section shows a simple use of the core RCU API to protect a

global pointer to a dynamically allocated structure. More-typical

uses of RCU may be found in listRCU.txt, arrayRCU.txt, and NMI-RCU.txt.

struct foo {

int a;

char b;

long c;

};

DEFINE_SPINLOCK(foo_mutex);

struct foo __rcu *gbl_foo;

/*

* Create a new struct foo that is the same as the one currently

* pointed to by gbl_foo, except that field "a" is replaced

* with "new_a". Points gbl_foo to the new structure, and

* frees up the old structure after a grace period.

*

* Uses rcu_assign_pointer() to ensure that concurrent readers

* see the initialized version of the new structure.

*

* Uses synchronize_rcu() to ensure that any readers that might

* have references to the old structure complete before freeing

* the old structure.

*/

void foo_update_a(int new_a)

{

struct foo *new_fp;

struct foo *old_fp;

new_fp = kmalloc(sizeof(*new_fp), GFP_KERNEL);

spin_lock(&foo_mutex);

old_fp = rcu_dereference_protected(gbl_foo, lockdep_is_held(&foo_mutex));

*new_fp = *old_fp;

new_fp->a = new_a;

rcu_assign_pointer(gbl_foo, new_fp);

spin_unlock(&foo_mutex);

synchronize_rcu();

kfree(old_fp);

}

/*

* Return the value of field "a" of the current gbl_foo

* structure. Use rcu_read_lock() and rcu_read_unlock()

* to ensure that the structure does not get deleted out

* from under us, and use rcu_dereference() to ensure that

* we see the initialized version of the structure (important

* for DEC Alpha and for people reading the code).

*/

int foo_get_a(void)

{

int retval;

rcu_read_lock();

retval = rcu_dereference(gbl_foo)->a;

rcu_read_unlock();

return retval;

}

So, to sum up:

o Use rcu_read_lock() and rcu_read_unlock() to guard RCU

read-side critical sections.

o Within an RCU read-side critical section, use rcu_dereference()

to dereference RCU-protected pointers.

o Use some solid scheme (such as locks or semaphores) to

keep concurrent updates from interfering with each other.

o Use rcu_assign_pointer() to update an RCU-protected pointer.

This primitive protects concurrent readers from the updater,

-not- concurrent updates from each other! You therefore still

need to use locking (or something similar) to keep concurrent

rcu_assign_pointer() primitives from interfering with each other.

o Use synchronize_rcu() -after- removing a data element from an

RCU-protected data structure, but -before- reclaiming/freeing

the data element, in order to wait for the completion of all

RCU read-side critical sections that might be referencing that

data item.

See checklist.txt for additional rules to follow when using RCU.

And again, more-typical uses of RCU may be found in listRCU.txt,

arrayRCU.txt, and NMI-RCU.txt.

4. WHAT IF MY UPDATING THREAD CANNOT BLOCK?

In the example above, foo_update_a() blocks until a grace period elapses.

This is quite simple, but in some cases one cannot afford to wait so

long -- there might be other high-priority work to be done.

In such cases, one uses call_rcu() rather than synchronize_rcu().

The call_rcu() API is as follows:

void call_rcu(struct rcu_head * head,

void (*func)(struct rcu_head *head));

This function invokes func(head) after a grace period has elapsed.

This invocation might happen from either softirq or process context,

so the function is not permitted to block. The foo struct needs to

have an rcu_head structure added, perhaps as follows:

struct foo {

int a;

char b;

long c;

struct rcu_head rcu;

};

The foo_update_a() function might then be written as follows:

/*

* Create a new struct foo that is the same as the one currently

* pointed to by gbl_foo, except that field "a" is replaced

* with "new_a". Points gbl_foo to the new structure, and

* frees up the old structure after a grace period.

*

* Uses rcu_assign_pointer() to ensure that concurrent readers

* see the initialized version of the new structure.

*

* Uses call_rcu() to ensure that any readers that might have

* references to the old structure complete before freeing the

* old structure.

*/

void foo_update_a(int new_a)

{

struct foo *new_fp;

struct foo *old_fp;

new_fp = kmalloc(sizeof(*new_fp), GFP_KERNEL);

spin_lock(&foo_mutex);

old_fp = rcu_dereference_protected(gbl_foo, lockdep_is_held(&foo_mutex));

*new_fp = *old_fp;

new_fp->a = new_a;

rcu_assign_pointer(gbl_foo, new_fp);

spin_unlock(&foo_mutex);

call_rcu(&old_fp->rcu, foo_reclaim);

}

The foo_reclaim() function might appear as follows:

void foo_reclaim(struct rcu_head *rp)

{

struct foo *fp = container_of(rp, struct foo, rcu);

foo_cleanup(fp->a);

kfree(fp);

}

The container_of() primitive is a macro that, given a pointer into a

struct, the type of the struct, and the pointed-to field within the

struct, returns a pointer to the beginning of the struct.

The use of call_rcu() permits the caller of foo_update_a() to

immediately regain control, without needing to worry further about the

old version of the newly updated element. It also clearly shows the

RCU distinction between updater, namely foo_update_a(), and reclaimer,

namely foo_reclaim().

The summary of advice is the same as for the previous section, except

that we are now using call_rcu() rather than synchronize_rcu():

o Use call_rcu() -after- removing a data element from an

RCU-protected data structure in order to register a callback

function that will be invoked after the completion of all RCU

read-side critical sections that might be referencing that

data item.

If the callback for call_rcu() is not doing anything more than calling

kfree() on the structure, you can use kfree_rcu() instead of call_rcu()

to avoid having to write your own callback:

kfree_rcu(old_fp, rcu);

Again, see checklist.txt for additional rules governing the use of RCU.

5. WHAT ARE SOME SIMPLE IMPLEMENTATIONS OF RCU?

One of the nice things about RCU is that it has extremely simple "toy"

implementations that are a good first step towards understanding the

production-quality implementations in the Linux kernel. This section

presents two such "toy" implementations of RCU, one that is implemented

in terms of familiar locking primitives, and another that more closely

resembles "classic" RCU. Both are way too simple for real-world use,

lacking both functionality and performance. However, they are useful

in getting a feel for how RCU works. See kernel/rcupdate.c for a

production-quality implementation, and see:

http://www.rdrop.com/users/paulmck/RCU

for papers describing the Linux kernel RCU implementation. The OLS'01

and OLS'02 papers are a good introduction, and the dissertation provides

more details on the current implementation as of early 2004.

5A. "TOY" IMPLEMENTATION #1: LOCKING

This section presents a "toy" RCU implementation that is based on

familiar locking primitives. Its overhead makes it a non-starter for

real-life use, as does its lack of scalability. It is also unsuitable

for realtime use, since it allows scheduling latency to "bleed" from

one read-side critical section to another.

However, it is probably the easiest implementation to relate to, so is

a good starting point.

It is extremely simple:

static DEFINE_RWLOCK(rcu_gp_mutex);

void rcu_read_lock(void)

{

read_lock(&rcu_gp_mutex);

}

void rcu_read_unlock(void)

{

read_unlock(&rcu_gp_mutex);

}

void synchronize_rcu(void)

{

write_lock(&rcu_gp_mutex);

write_unlock(&rcu_gp_mutex);

}

[You can ignore rcu_assign_pointer() and rcu_dereference() without

missing much. But here they are anyway. And whatever you do, don't

forget about them when submitting patches making use of RCU!]

#define rcu_assign_pointer(p, v) ({ \

smp_wmb(); \

(p) = (v); \

})

#define rcu_dereference(p) ({ \

typeof(p) _________p1 = p; \

smp_read_barrier_depends(); \

(_________p1); \

})

The rcu_read_lock() and rcu_read_unlock() primitive read-acquire

and release a global reader-writer lock. The synchronize_rcu()

primitive write-acquires this same lock, then immediately releases

it. This means that once synchronize_rcu() exits, all RCU read-side

critical sections that were in progress before synchronize_rcu() was

called are guaranteed to have completed -- there is no way that

synchronize_rcu() would have been able to write-acquire the lock

otherwise.

It is possible to nest rcu_read_lock(), since reader-writer locks may

be recursively acquired. Note also that rcu_read_lock() is immune

from deadlock (an important property of RCU). The reason for this is

that the only thing that can block rcu_read_lock() is a synchronize_rcu().

But synchronize_rcu() does not acquire any locks while holding rcu_gp_mutex,

so there can be no deadlock cycle.

Quick Quiz #1: Why is this argument naive? How could a deadlock

occur when using this algorithm in a real-world Linux

kernel? How could this deadlock be avoided?

5B. "TOY" EXAMPLE #2: CLASSIC RCU

This section presents a "toy" RCU implementation that is based on

"classic RCU". It is also short on performance (but only for updates) and

on features such as hotplug CPU and the ability to run in CONFIG_PREEMPT

kernels. The definitions of rcu_dereference() and rcu_assign_pointer()

are the same as those shown in the preceding section, so they are omitted.

void rcu_read_lock(void) { }

void rcu_read_unlock(void) { }

void synchronize_rcu(void)

{

int cpu;

for_each_possible_cpu(cpu)

run_on(cpu);

}

Note that rcu_read_lock() and rcu_read_unlock() do absolutely nothing.

This is the great strength of classic RCU in a non-preemptive kernel:

read-side overhead is precisely zero, at least on non-Alpha CPUs.

And there is absolutely no way that rcu_read_lock() can possibly

participate in a deadlock cycle!

The implementation of synchronize_rcu() simply schedules itself on each

CPU in turn. The run_on() primitive can be implemented straightforwardly

in terms of the sched_setaffinity() primitive. Of course, a somewhat less

"toy" implementation would restore the affinity upon completion rather

than just leaving all tasks running on the last CPU, but when I said

"toy", I meant -toy-!

So how the heck is this supposed to work???

Remember that it is illegal to block while in an RCU read-side critical

section. Therefore, if a given CPU executes a context switch, we know

that it must have completed all preceding RCU read-side critical sections.

Once -all- CPUs have executed a context switch, then -all- preceding

RCU read-side critical sections will have completed.

So, suppose that we remove a data item from its structure and then invoke

synchronize_rcu(). Once synchronize_rcu() returns, we are guaranteed

that there are no RCU read-side critical sections holding a reference

to that data item, so we can safely reclaim it.

Quick Quiz #2: Give an example where Classic RCU's read-side

overhead is -negative-.

Quick Quiz #3: If it is illegal to block in an RCU read-side

critical section, what the heck do you do in

PREEMPT_RT, where normal spinlocks can block???

6. ANALOGY WITH READER-WRITER LOCKING

Although RCU can be used in many different ways, a very common use of

RCU is analogous to reader-writer locking. The following unified

diff shows how closely related RCU and reader-writer locking can be.

@@ -13,15 14,15 @@

struct list_head *lp;

struct el *p;

- read_lock();

- list_for_each_entry(p, head, lp) {

rcu_read_lock();

list_for_each_entry_rcu(p, head, lp) {

if (p->key == key) {

*result = p->data;

- read_unlock();

rcu_read_unlock();

return 1;

}

}

- read_unlock();

rcu_read_unlock();

return 0;

}

@@ -29,15 30,16 @@

{

struct el *p;

- write_lock(&listmutex);

spin_lock(&listmutex);

list_for_each_entry(p, head, lp) {

if (p->key == key) {

- list_del(&p->list);

- write_unlock(&listmutex);

list_del_rcu(&p->list);

spin_unlock(&listmutex);

synchronize_rcu();

kfree(p);

return 1;

}

}

- write_unlock(&listmutex);

spin_unlock(&listmutex);

return 0;

}

Or, for those who prefer a side-by-side listing:

1 struct el { 1 struct el {

2 struct list_head list; 2 struct list_head list;

3 long key; 3 long key;

4 spinlock_t mutex; 4 spinlock_t mutex;

5 int data; 5 int data;

6 /* Other data fields */ 6 /* Other data fields */

7 }; 7 };

8 spinlock_t listmutex; 8 spinlock_t listmutex;

9 struct el head; 9 struct el head;

1 int search(long key, int *result) 1 int search(long key, int *result)

2 { 2 {

3 struct list_head *lp; 3 struct list_head *lp;

4 struct el *p; 4 struct el *p;

5 5

6 read_lock(); 6 rcu_read_lock();

7 list_for_each_entry(p, head, lp) { 7 list_for_each_entry_rcu(p, head, lp) {

8 if (p->key == key) { 8 if (p->key == key) {

9 *result = p->data; 9 *result = p->data;

10 read_unlock(); 10 rcu_read_unlock();

11 return 1; 11 return 1;

12 } 12 }

13 } 13 }

14 read_unlock(); 14 rcu_read_unlock();

15 return 0; 15 return 0;

16 } 16 }

1 int delete(long key) 1 int delete(long key)

2 { 2 {

3 struct el *p; 3 struct el *p;

4 4

5 write_lock(&listmutex); 5 spin_lock(&listmutex);

6 list_for_each_entry(p, head, lp) { 6 list_for_each_entry(p, head, lp) {

7 if (p->key == key) { 7 if (p->key == key) {

8 list_del(&p->list); 8 list_del_rcu(&p->list);

9 write_unlock(&listmutex); 9 spin_unlock(&listmutex);

10 synchronize_rcu();

10 kfree(p); 11 kfree(p);

11 return 1; 12 return 1;

12 } 13 }

13 } 14 }

14 write_unlock(&listmutex); 15 spin_unlock(&listmutex);

15 return 0; 16 return 0;

16 } 17 }

Either way, the differences are quite small. Read-side locking moves

to rcu_read_lock() and rcu_read_unlock, update-side locking moves from

a reader-writer lock to a simple spinlock, and a synchronize_rcu()

precedes the kfree().

However, there is one potential catch: the read-side and update-side

critical sections can now run concurrently. In many cases, this will

not be a problem, but it is necessary to check carefully regardless.

For example, if multiple independent list updates must be seen as

a single atomic update, converting to RCU will require special care.

Also, the presence of synchronize_rcu() means that the RCU version of

delete() can now block. If this is a problem, there is a callback-based

mechanism that never blocks, namely call_rcu() or kfree_rcu(), that can

be used in place of synchronize_rcu().

7. FULL LIST OF RCU APIs

The RCU APIs are documented in docbook-format header comments in the

Linux-kernel source code, but it helps to have a full list of the

APIs, since there does not appear to be a way to categorize them

in docbook. Here is the list, by category.

RCU list traversal:

list_entry_rcu

list_first_entry_rcu

list_next_rcu

list_for_each_entry_rcu

list_for_each_entry_continue_rcu

hlist_first_rcu

hlist_next_rcu

hlist_pprev_rcu

hlist_for_each_entry_rcu

hlist_for_each_entry_rcu_bh

hlist_for_each_entry_continue_rcu

hlist_for_each_entry_continue_rcu_bh

hlist_nulls_first_rcu

hlist_nulls_for_each_entry_rcu

hlist_bl_first_rcu

hlist_bl_for_each_entry_rcu

RCU pointer/list update:

rcu_assign_pointer

list_add_rcu

list_add_tail_rcu

list_del_rcu

list_replace_rcu

hlist_add_behind_rcu

hlist_add_before_rcu

hlist_add_head_rcu

hlist_del_rcu

hlist_del_init_rcu

hlist_replace_rcu

list_splice_init_rcu()

hlist_nulls_del_init_rcu

hlist_nulls_del_rcu

hlist_nulls_add_head_rcu

hlist_bl_add_head_rcu

hlist_bl_del_init_rcu

hlist_bl_del_rcu

hlist_bl_set_first_rcu

RCU: Critical sections Grace period Barrier

rcu_read_lock synchronize_net rcu_barrier

rcu_read_unlock synchronize_rcu

rcu_dereference synchronize_rcu_expedited

rcu_read_lock_held call_rcu

rcu_dereference_check kfree_rcu

rcu_dereference_protected

bh: Critical sections Grace period Barrier

rcu_read_lock_bh call_rcu_bh rcu_barrier_bh

rcu_read_unlock_bh synchronize_rcu_bh

rcu_dereference_bh synchronize_rcu_bh_expedited

rcu_dereference_bh_check

rcu_dereference_bh_protected

rcu_read_lock_bh_held

sched: Critical sections Grace period Barrier

rcu_read_lock_sched synchronize_sched rcu_barrier_sched

rcu_read_unlock_sched call_rcu_sched

[preempt_disable] synchronize_sched_expedited

[and friends]

rcu_read_lock_sched_notrace

rcu_read_unlock_sched_notrace

rcu_dereference_sched

rcu_dereference_sched_check

rcu_dereference_sched_protected

rcu_read_lock_sched_held

SRCU: Critical sections Grace period Barrier

srcu_read_lock synchronize_srcu srcu_barrier

srcu_read_unlock call_srcu

srcu_dereference synchronize_srcu_expedited

srcu_dereference_check

srcu_read_lock_held

SRCU: Initialization/cleanup

init_srcu_struct

cleanup_srcu_struct

All: lockdep-checked RCU-protected pointer access

rcu_access_pointer

rcu_dereference_raw

RCU_LOCKDEP_WARN

rcu_sleep_check

RCU_NONIDLE

See the comment headers in the source code (or the docbook generated

from them) for more information.

However, given that there are no fewer than four families of RCU APIs

in the Linux kernel, how do you choose which one to use? The following

list can be helpful:

a. Will readers need to block? If so, you need SRCU.

b. What about the -rt patchset? If readers would need to block

in an non-rt kernel, you need SRCU. If readers would block

in a -rt kernel, but not in a non-rt kernel, SRCU is not

necessary.

c. Do you need to treat NMI handlers, hardirq handlers,

and code segments with preemption disabled (whether

via preempt_disable(), local_irq_save(), local_bh_disable(),

or some other mechanism) as if they were explicit RCU readers?

If so, RCU-sched is the only choice that will work for you.

d. Do you need RCU grace periods to complete even in the face

of softirq monopolization of one or more of the CPUs? For

example, is your code subject to network-based denial-of-service

attacks? If so, you need RCU-bh.

e. Is your workload too update-intensive for normal use of

RCU, but inappropriate for other synchronization mechanisms?

If so, consider SLAB_DESTROY_BY_RCU. But please be careful!

f. Do you need read-side critical sections that are respected

even though they are in the middle of the idle loop, during

user-mode execution, or on an offlined CPU? If so, SRCU is the

only choice that will work for you.

g. Otherwise, use RCU.

Of course, this all assumes that you have determined that RCU is in fact

the right tool for your job.

8. ANSWERS TO QUICK QUIZZES

Quick Quiz #1: Why is this argument naive? How could a deadlock

occur when using this algorithm in a real-world Linux

kernel? [Referring to the lock-based "toy" RCU

algorithm.]

Answer: Consider the following sequence of events:

1. CPU 0 acquires some unrelated lock, call it

"problematic_lock", disabling irq via

spin_lock_irqsave().

2. CPU 1 enters synchronize_rcu(), write-acquiring

rcu_gp_mutex.

3. CPU 0 enters rcu_read_lock(), but must wait

because CPU 1 holds rcu_gp_mutex.

4. CPU 1 is interrupted, and the irq handler

attempts to acquire problematic_lock.

The system is now deadlocked.

One way to avoid this deadlock is to use an approach like

that of CONFIG_PREEMPT_RT, where all normal spinlocks

become blocking locks, and all irq handlers execute in

the context of special tasks. In this case, in step 4

above, the irq handler would block, allowing CPU 1 to

release rcu_gp_mutex, avoiding the deadlock.

Even in the absence of deadlock, this RCU implementation

allows latency to "bleed" from readers to other

readers through synchronize_rcu(). To see this,

consider task A in an RCU read-side critical section

(thus read-holding rcu_gp_mutex), task B blocked

attempting to write-acquire rcu_gp_mutex, and

task C blocked in rcu_read_lock() attempting to

read_acquire rcu_gp_mutex. Task A's RCU read-side

latency is holding up task C, albeit indirectly via

task B.

Realtime RCU implementations therefore use a counter-based

approach where tasks in RCU read-side critical sections

cannot be blocked by tasks executing synchronize_rcu().

Quick Quiz #2: Give an example where Classic RCU's read-side

overhead is -negative-.

Answer: Imagine a single-CPU system with a non-CONFIG_PREEMPT

kernel where a routing table is used by process-context

code, but can be updated by irq-context code (for example,

by an "ICMP REDIRECT" packet). The usual way of handling

this would be to have the process-context code disable

interrupts while searching the routing table. Use of

RCU allows such interrupt-disabling to be dispensed with.

Thus, without RCU, you pay the cost of disabling interrupts,

and with RCU you don't.

One can argue that the overhead of RCU in this

case is negative with respect to the single-CPU

interrupt-disabling approach. Others might argue that

the overhead of RCU is merely zero, and that replacing

the positive overhead of the interrupt-disabling scheme

with the zero-overhead RCU scheme does not constitute

negative overhead.

In real life, of course, things are more complex. But

even the theoretical possibility of negative overhead for

a synchronization primitive is a bit unexpected. ;-)

Quick Quiz #3: If it is illegal to block in an RCU read-side

critical section, what the heck do you do in

PREEMPT_RT, where normal spinlocks can block???

Answer: Just as PREEMPT_RT permits preemption of spinlock

critical sections, it permits preemption of RCU

read-side critical sections. It also permits

spinlocks blocking while in RCU read-side critical

sections.

Why the apparent inconsistency? Because it is it

possible to use priority boosting to keep the RCU

grace periods short if need be (for example, if running

short of memory). In contrast, if blocking waiting

for (say) network reception, there is no way to know

what should be boosted. Especially given that the

process we need to boost might well be a human being

who just went out for a pizza or something. And although

a computer-operated cattle prod might arouse serious

interest, it might also provoke serious objections.

Besides, how does the computer know what pizza parlor

the human being went to???

ACKNOWLEDGEMENTS

My thanks to the people who helped make this human-readable, including

Jon Walpole, Josh Triplett, Serge Hallyn, Suzanne Wood, and Alan Stern.

For more information, see http://www.rdrop.com/users/paulmck/RCU.

*/

Using RCU to Protect Read-Mostly Linked Lists

One of the best applications of RCU is to protect read-mostly linked lists

("struct list_head" in list.h). One big advantage of this approach

is that all of the required memory barriers are included for you in

the list macros. This document describes several applications of RCU,

with the best fits first.

Example 1: Read-Side Action Taken Outside of Lock, No In-Place Updates

The best applications are cases where, if reader-writer locking were

used, the read-side lock would be dropped before taking any action

based on the results of the search. The most celebrated example is

the routing table. Because the routing table is tracking the state of

equipment outside of the computer, it will at times contain stale data.

Therefore, once the route has been computed, there is no need to hold

the routing table static during transmission of the packet. After all,

you can hold the routing table static all you want, but that won't keep

the external Internet from changing, and it is the state of the external

Internet that really matters. In addition, routing entries are typically

added or deleted, rather than being modified in place.

A straightforward example of this use of RCU may be found in the

system-call auditing support. For example, a reader-writer locked

implementation of audit_filter_task() might be as follows:

static enum audit_state audit_filter_task(struct task_struct *tsk)

{

struct audit_entry *e;

enum audit_state state;

read_lock(&auditsc_lock);

/* Note: audit_netlink_sem held by caller. */

list_for_each_entry(e, &audit_tsklist, list) {

if (audit_filter_rules(tsk, &e->rule, NULL, &state)) {

read_unlock(&auditsc_lock);

return state;

}

}

read_unlock(&auditsc_lock);

return AUDIT_BUILD_CONTEXT;

}

Here the list is searched under the lock, but the lock is dropped before

the corresponding value is returned. By the time that this value is acted

on, the list may well have been modified. This makes sense, since if

you are turning auditing off, it is OK to audit a few extra system calls.

This means that RCU can be easily applied to the read side, as follows:

static enum audit_state audit_filter_task(struct task_struct *tsk)

{

struct audit_entry *e;

enum audit_state state;

rcu_read_lock();

/* Note: audit_netlink_sem held by caller. */

list_for_each_entry_rcu(e, &audit_tsklist, list) {

if (audit_filter_rules(tsk, &e->rule, NULL, &state)) {

rcu_read_unlock();

return state;

}

}

rcu_read_unlock();

return AUDIT_BUILD_CONTEXT;

}

The read_lock() and read_unlock() calls have become rcu_read_lock()

and rcu_read_unlock(), respectively, and the list_for_each_entry() has

become list_for_each_entry_rcu(). The _rcu() list-traversal primitives

insert the read-side memory barriers that are required on DEC Alpha CPUs.

The changes to the update side are also straightforward. A reader-writer

lock might be used as follows for deletion and insertion:

static inline int audit_del_rule(struct audit_rule *rule,

struct list_head *list)

{

struct audit_entry *e;

write_lock(&auditsc_lock);

list_for_each_entry(e, list, list) {

if (!audit_compare_rule(rule, &e->rule)) {

list_del(&e->list);

write_unlock(&auditsc_lock);

return 0;

}

}

write_unlock(&auditsc_lock);

return -EFAULT; /* No matching rule */

}

static inline int audit_add_rule(struct audit_entry *entry,

struct list_head *list)

{

write_lock(&auditsc_lock);

if (entry->rule.flags & AUDIT_PREPEND) {

entry->rule.flags &= ~AUDIT_PREPEND;

list_add(&entry->list, list);

} else {

list_add_tail(&entry->list, list);

}

write_unlock(&auditsc_lock);

return 0;

}

Following are the RCU equivalents for these two functions:

static inline int audit_del_rule(struct audit_rule *rule,

struct list_head *list)

{

struct audit_entry *e;

/* Do not use the _rcu iterator here, since this is the only

* deletion routine. */

list_for_each_entry(e, list, list) {

if (!audit_compare_rule(rule, &e->rule)) {

list_del_rcu(&e->list);

call_rcu(&e->rcu, audit_free_rule);

return 0;

}

}

return -EFAULT; /* No matching rule */

}

static inline int audit_add_rule(struct audit_entry *entry,

struct list_head *list)

{

if (entry->rule.flags & AUDIT_PREPEND) {

entry->rule.flags &= ~AUDIT_PREPEND;

list_add_rcu(&entry->list, list);

} else {

list_add_tail_rcu(&entry->list, list);

}

return 0;

}

Normally, the write_lock() and write_unlock() would be replaced by

a spin_lock() and a spin_unlock(), but in this case, all callers hold

audit_netlink_sem, so no additional locking is required. The auditsc_lock

can therefore be eliminated, since use of RCU eliminates the need for

writers to exclude readers. Normally, the write_lock() calls would

be converted into spin_lock() calls.

The list_del(), list_add(), and list_add_tail() primitives have been

replaced by list_del_rcu(), list_add_rcu(), and list_add_tail_rcu().

The _rcu() list-manipulation primitives add memory barriers that are

needed on weakly ordered CPUs (most of them!). The list_del_rcu()

primitive omits the pointer poisoning debug-assist code that would

otherwise cause concurrent readers to fail spectacularly.

So, when readers can tolerate stale data and when entries are either added

or deleted, without in-place modification, it is very easy to use RCU!

Example 2: Handling In-Place Updates

The system-call auditing code does not update auditing rules in place.

However, if it did, reader-writer-locked code to do so might look as

follows (presumably, the field_count is only permitted to decrease,

otherwise, the added fields would need to be filled in):

static inline int audit_upd_rule(struct audit_rule *rule,

struct list_head *list,

__u32 newaction,

__u32 newfield_count)

{

struct audit_entry *e;

struct audit_newentry *ne;

write_lock(&auditsc_lock);

/* Note: audit_netlink_sem held by caller. */

list_for_each_entry(e, list, list) {

if (!audit_compare_rule(rule, &e->rule)) {

e->rule.action = newaction;

e->rule.file_count = newfield_count;

write_unlock(&auditsc_lock);

return 0;

}

}

write_unlock(&auditsc_lock);

return -EFAULT; /* No matching rule */

}

The RCU version creates a copy, updates the copy, then replaces the old

entry with the newly updated entry. This sequence of actions, allowing

concurrent reads while doing a copy to perform an update, is what gives

RCU ("read-copy update") its name. The RCU code is as follows:

static inline int audit_upd_rule(struct audit_rule *rule,

struct list_head *list,

__u32 newaction,

__u32 newfield_count)

{

struct audit_entry *e;

struct audit_newentry *ne;

list_for_each_entry(e, list, list) {

if (!audit_compare_rule(rule, &e->rule)) {

ne = kmalloc(sizeof(*entry), GFP_ATOMIC);

if (ne == NULL)

return -ENOMEM;

audit_copy_rule(&ne->rule, &e->rule);

ne->rule.action = newaction;

ne->rule.file_count = newfield_count;

list_replace_rcu(&e->list, &ne->list);

call_rcu(&e->rcu, audit_free_rule);

return 0;

}

}

return -EFAULT; /* No matching rule */

}

Again, this assumes that the caller holds audit_netlink_sem. Normally,

the reader-writer lock would become a spinlock in this sort of code.

Example 3: Eliminating Stale Data

The auditing examples above tolerate stale data, as do most algorithms

that are tracking external state. Because there is a delay from the

time the external state changes before Linux becomes aware of the change,

additional RCU-induced staleness is normally not a problem.

However, there are many examples where stale data cannot be tolerated.

One example in the Linux kernel is the System V IPC (see the ipc_lock()

function in ipc/util.c). This code checks a "deleted" flag under a

per-entry spinlock, and, if the "deleted" flag is set, pretends that the

entry does not exist. For this to be helpful, the search function must

return holding the per-entry spinlock, as ipc_lock() does in fact do.

Quick Quiz: Why does the search function need to return holding the

per-entry lock for this deleted-flag technique to be helpful?

If the system-call audit module were to ever need to reject stale data,

one way to accomplish this would be to add a "deleted" flag and a "lock"

spinlock to the audit_entry structure, and modify audit_filter_task()

as follows:

static enum audit_state audit_filter_task(struct task_struct *tsk)

{

struct audit_entry *e;

enum audit_state state;

rcu_read_lock();

list_for_each_entry_rcu(e, &audit_tsklist, list) {

if (audit_filter_rules(tsk, &e->rule, NULL, &state)) {

spin_lock(&e->lock);

if (e->deleted) {

spin_unlock(&e->lock);

rcu_read_unlock();

return AUDIT_BUILD_CONTEXT;

}

rcu_read_unlock();

return state;

}

}

rcu_read_unlock();

return AUDIT_BUILD_CONTEXT;

}

Note that this example assumes that entries are only added and deleted.

Additional mechanism is required to deal correctly with the

update-in-place performed by audit_upd_rule(). For one thing,

audit_upd_rule() would need additional memory barriers to ensure

that the list_add_rcu() was really executed before the list_del_rcu().

The audit_del_rule() function would need to set the "deleted"

flag under the spinlock as follows:

static inline int audit_del_rule(struct audit_rule *rule,

struct list_head *list)

{

struct audit_entry *e;

/* Do not need to use the _rcu iterator here, since this

* is the only deletion routine. */

list_for_each_entry(e, list, list) {

if (!audit_compare_rule(rule, &e->rule)) {

spin_lock(&e->lock);

list_del_rcu(&e->list);

e->deleted = 1;

spin_unlock(&e->lock);

call_rcu(&e->rcu, audit_free_rule);

return 0;

}

}

return -EFAULT; /* No matching rule */

}

Summary

Read-mostly list-based data structures that can tolerate stale data are

the most amenable to use of RCU. The simplest case is where entries are

either added or deleted from the data structure (or atomically modified

in place), but non-atomic in-place modifications can be handled by making

a copy, updating the copy, then replacing the original with the copy.

If stale data cannot be tolerated, then a "deleted" flag may be used

in conjunction with a per-entry spinlock in order to allow the search

function to reject newly deleted data.

Answer to Quick Quiz

Why does the search function need to return holding the per-entry

lock for this deleted-flag technique to be helpful?

If the search function drops the per-entry lock before returning,

then the caller will be processing stale data in any case. If it

is really OK to be processing stale data, then you don't need a

"deleted" flag. If processing stale data really is a problem,

then you need to hold the per-entry lock across all of the code

that uses the value that was returned.

在使用RCU时,对共享资源的访问在大部分时间应该是只读的,写访问应该相对较少,因为写访问多了必然相对于其他锁机制而已更占系统资源,影响效率。其次是读者在持有rcu_read_lock(RCU读锁定函数)的时候,不能发生进程上下文切换,否则,因为写者需要等待读者完成方可进行,则此时写者进程也会一直被阻塞,影响系统的正常运行。再次写者执行完毕后需要调用回调函数,此时发生上下文切换,当前进程进入睡眠,则系统将一直不能调用回调函数,更槽糕的是,此时其它进程若再去执行共享的临界区,必然造成一定的错误。最后一点是受RCU机制保护的资源必须是通过指针访问。因为从RCU机制上看,几乎所有操作都是针对指针数据的;

同步函数最为重要,即synchronize_rcu()。读者函数的实质其实很简单:禁止抢占,也就是说在RCU期间不允许发生进程上下文切换,原因上述已提及,即是写者需要等待读者完成方可进行,则此时写者进程也会一直被阻塞,影响系统的正常运行等,故而不允许在RCU期间发生进程上下文切换

关于写者函数,主要就是call_rcu和call_rcu_bh两个函数。其中call_rcu能实现的功能是它不会使写者阻塞,因而它可在中断上下文及软中断使用,该函数将函数func挂接到RCU的回调函数链表上,然后立即返回,读者函数中提及的synchronize_rcu()函数在实现时也调用了该函数。而call_rcu_bh函数实现的功能几乎与call_rcu完全相同,唯一的差别是它将软中断的完成当作经历一个quiescent state(静默状态,本节一开始有提及这个概念), 因此若写者使用了该函数,那么读者需对应的使用rcu_read_lock_bh() 和rcu_read_unlock_bh()。

使用rcu_read_lock_bh() 和rcu_read_unlock_bh()函数的原因是由于call_rcu_bh函数不会使写者阻塞,可在中断上下文及软中断使用。这表明此时系统中的中断和软中断并没有被关闭。那么写者在调用call_rcu_bh函数访问临界区时,RCU机制下的读者也能访问临界区。此时对于读者而言,它若是需要读取临界区的内容,它必须把软中断关闭,以免读者在当前的进程上下文过程中被软中断打断(上述内容提过软中断可以打断当前的进程上下文)。而rcu_read_lock_bh() 和rcu_read_unlock_bh()函数的实质是调用local_bh_disable()和local_bh_enable()函数,显然这是实现了禁止软中断和使能软中断的功能。

另外在Linux源码中关于call_rcu_bh函数的注释中还明确说明了如果当前的进程是在中断上下文中,则需要执行rcu_read_lock()和rcu_read_unlock(),结合这两个函数的实现实质表明它实际上禁止或使能内核的抢占调度,原因不言而喻,避免当前进程在执行读写过程中被其它进程抢占。同时内核注释还表明call_rcu_bh这个接口函数的使用条件是在大部分的读临界区操作发生在软中断上下文中,原因还是需从它实现的功能出发,相信很容易理解,主要是要从执行效率方面考虑。

static inline void rcu_read_lock_bh(void);

static inline void rcu_read_unlock_bh(void);

这个变种只在修改是通过 call_rcu_bh进行的情况下使用,因为 call_rcu_bh将把 softirq 的执行完毕也认为是一个 quiescent state,因此如果修改是通过 call_rcu_bh 进行的,在进程上下文的读端临界区必须使用这一变种

每一个 CPU 维护两个数据结构 rcu_sched_data,rcu_bh_data,它们用于保存回调函数。函数call_rcu和函数call_rcu_bh用于注册回调函数,前者把回调函数注册到rcu_sched_data,而后者则把回调函数注册到rcu_bh_data,在每一个数据结构上,回调函数被组成一个链表,先注册的排在前头,后注册的排在末尾;时钟中断处理函数(update_process_times)调用函数rcu_check_callbacks

函数rcu_check_callbacks首先检查该CPU是否经历了一个quiescent state,如果(或):

- 当前进程运行在用户态;

- 当前进程为idle且当前不处在运行softirq状态,也不处在运行IRQ处理函数的状态;

该CPU已经经历了一个quiescent state,因此通过调用函数rcu_sched_qs和rcu_bh_qs标记该CPU的数据结构rcu_sched_data和rcu_bh_data的标记字段passed_quiesc,以记录该CPU已经经历一个quiescent state。

否则,如果当前不处在运行softirq状态,那么,只标记该CPU的数据结构rcu_bh_data的标记字段passed_quiesc,以记录该CPU已经经历一个quiescent state。注意,该标记只对rcu_bh_data有效。

然后,函数rcu_check_callbacks将调用开启RCU_SOFTIRQ。

synchronize_rcu()在RCU中是一个最核心的函数,它用来等待之前的读者全部退出。

在完整的宽限期结束后,即在所有当前正在执行的RCU读取端临界区完成之后,控制权会在一段时间后返回给调用者。

免责声明:本文仅代表文章作者的个人观点,与本站无关。其原创性、真实性以及文中陈述文字和内容未经本站证实,对本文以及其中全部或者部分内容文字的真实性、完整性和原创性本站不作任何保证或承诺,请读者仅作参考,并自行核实相关内容。文章投诉邮箱:anhduc.ph@yahoo.com