kafka源码全面讲解(Kafka核心源码剖析系列)

数据与智能 出版了专著「构建企业级推荐系统:算法、工程实现与案例分析」。每周输出7篇推荐系统、数据分析、大数据、AI原创文章。「数据与智能」(同名视频号、知乎、头条、B站、快手、抖音、小红书等自媒体平台号) 社区,聚焦数据、智能领域的知识分享与传播。

作者 | 吴邪 大数据4年从业经验,目前就职于广州一家互联网公司,负责大数据基础平台自研、离线计算&实时计算研究

编辑 | auroral-L

全文共11194字,预计阅读70分钟。

第三章 Kafka 消息发送线程及网络通信

1. Sender 线程

2. NetworkClient

2.1 Selectable

2.2 MetadataUpdater

2.3 InFlightRequests

2.4 NetworkClient

3. 小结

回顾一下前面提到的发送消息的时序图,上一节说到了Kafka相关的元数据信息以及消息的封装,消息封装完成之后就开始将消息发送出去,这个任务由Sender线程来实现。

1. Sender线程

找到kafkaProducer这个对象,KafkaProducer的构造函数中有这样几行代码。

this.accumulator = new

RecordAccumulator(config.getInt(ProducerConfig.BATCH_SIZE_CONFIG),

this.totalMemorySize,

this.compressionType,

config.getLong(ProducerConfig.LINGER_MS_CONFIG),

retryBackoffMs,

metrics,

time);

构造了RecordAccumulator对象,设置了该对象中每个消息批次的大小、缓冲区大小、压缩格式等等。

紧接着就构建了一个非常重要的组件NetworkClient,用作发送消息的载体。

NetworkClient client = new NetworkClient(

new Selector(config.getLong(ProducerConfig.CONNECTIONS_MAX_IDLE_MS_CONFIG), this.metrics, time, "producer", channelBuilder),

this.Metadata,

clientId,

config.getInt(ProducerConfig.MAX_IN_FLIGHT_REQUESTS_PER_CONNECTION),

config.getLong(ProducerConfig.RECONNECT_BACKOFF_MS_CONFIG),

config.getInt(ProducerConfig.SEND_BUFFER_CONFIG),

config.getInt(ProducerConfig.RECEIVE_BUFFER_CONFIG),

this.requestTimeoutMs, time);

对于构建的NetworkClient,有几个重要的参数要注意一下:

√ connections.max.idle.ms: 表示一个网络连接最多空闲多久,超过这个空闲时间,就关闭这个网络连接,默认是9分钟。

√ max.in.flight.requests.per.connection:表示每个网络连接可以容忍 producer端发送给broker 消息然后消息没有响应的个数,默认是5个。(ps:producer向broker发送数据的时候,其实是存在多个网络连接)

√ send.buffer.bytes:socket发送数据的缓冲区的大小,默认值是128K。

√ receive.buffer.bytes:socket接受数据的缓冲区的大小,默认值是32K。

构建好消息发送的网络通道直到启动Sender线程,用于发送消息。

this.sender = new Sender(client,

this.metadata,

this.accumulator,

config.getInt(ProducerConfig.MAX_IN_FLIGHT_REQUESTS_PER_CONNECTION) == 1,

config.getInt(ProducerConfig.MAX_REQUEST_SIZE_CONFIG),

(short) parseAcks(config.getString(ProducerConfig.ACKS_CONFIG)),

config.getInt(ProducerConfig.RETRIES_CONFIG),

this.metrics,

new SystemTime(),

clientId,

this.requestTimeoutMs);

//默认的线程名前缀为kafka-producer-network-thread,其中clientId是生产者的id

String ioThreadName = "kafka-producer-network-thread" (clientId.length() > 0 ? " | " clientId : "");

//创建了一个守护线程,将Sender对象传进去。

this.ioThread = new KafkaThread(ioThreadName, this.sender, true);

//启动线程

this.ioThread.start();

看到这里就非常明确了,既然是线程,那么肯定有run()方法,我们重点关注该方法中的实现逻辑,在这里要补充一点值得我们借鉴的线程使用模式,可以看到在创建sender线程后,并没有立即启动sender线程,而且新创建了KafkaThread线程,将sender对象给传进去了,然后再启动KafkaThread线程,相信有不少小伙伴会有疑惑,我们进去KafkaThread这个类看一下其中的内容。

/**

* A wrapper for Thread that sets things up nicely

*/

public class KafkaThread extends Thread {

private final Logger log = LoggerFactory.getLogger(getClass());

public KafkaThread(final String name, Runnable runnable, boolean daemon) {

super(runnable, name);

//设置为后台守护线程

setDaemon(daemon);

setUncaughtExceptionHandler(new Thread.UncaughtExceptionHandler() {

public void uncaughtException(Thread t, Throwable e) {

log.error("Uncaught exception in " name ": ", e);

}

});

}

}

发现KafkaThread线程其实只是启动了一个守护线程,那么这样做的好处是什么呢?答案是可以将业务代码和线程本身解耦,复杂的业务逻辑可以在KafkaThread这样的线程中去实现,这样在代码层面上Sender线程就非常的简洁,可读性也比较高。

先看一下Sender这个对象的构造。

/**

* 主要的功能就是用处理向 Kafka 集群发送生产请求的后台线程,更新元数据信息,以及将消息发送到合适的节点

* The background thread that handles the sending of produce requests to the Kafka Cluster. This thread makes metadata

* requests to renew its view of the cluster and then sends produce requests to the appropriate nodes.

*/

public class Sender implements Runnable {

private static final Logger log = LoggerFactory.getLogger(Sender.class);

//kafka网络通信客户端,主要用于与broker的网络通信

private final KafkaClient client;

//消息累加器,包含了批量的消息记录

private final RecordAccumulator accumulator;

//客户端元数据信息

private final Metadata metadata;

/* the flag indicating whether the producer should guarantee the message order on the broker or not. */

//保证消息的顺序性的标记

private final boolean guaranteeMessageOrder;

/* the maximum request size to attempt to send to the server */

//对应的配置是max.request.size,代表调用send()方法发送的最大请求大小

private final int maxRequestSize;

/* the number of acknowledgements to request from the server */

//用于保证消息发送状态,分别有-1,0,1三种选项

private final short acks;

/* the number of times to retry a failed request before giving up */

//请求失败重试的次数

private final int retries;

/* the clock instance used for getting the time */

//时间工具,计算时间,没有特殊含义

private final Time time;

/* true while the sender thread is still running */

//表示线程状态,true则表示running

private volatile boolean running;

/* true when the caller wants to ignore all unsent/inflight messages and force close. */

//强制关闭消息发送的标识,一旦设置为true,则不管消息有没有发送成功都会忽略

private volatile boolean forceClose;

/* metrics */

//发送指标收集

private final SenderMetrics sensors;

/* param clientId of the client */

//生产者客户端id

private String clientId;

/* the max time to wait for the server to respond to the request*/

//请求超时时间

private final int requestTimeout;

//构造器

public Sender(KafkaClient client,

Metadata metadata,

RecordAccumulator accumulator,

boolean guaranteeMessageOrder,

int maxRequestSize,

short acks,

int retries,

Metrics metrics,

Time time,

String clientId,

int requestTimeout) {

this.client = client;

this.accumulator = accumulator;

this.metadata = metadata;

this.guaranteeMessageOrder = guaranteeMessageOrder;

this.maxRequestSize = maxRequestSize;

this.running = true;

this.acks = acks;

this.retries = retries;

this.time = time;

this.clientId = clientId;

this.sensors = new SenderMetrics(metrics);

this.requestTimeout = requestTimeout;

}

....

}

大概了解完Sender对象的初始化参数之后,开始步入正题,找到Sender对象中的run()方法。

/**

* The main run loop for the sender thread

*/

public void run() {

log.debug("Starting Kafka producer I/O thread.");

//sender线程启动起来了以后就是处于一直运行的状态

while (running) {

try {

//核心代码

run(time.milliseconds());

} catch (Exception e) {

log.error("Uncaught error in kafka producer I/O thread: ", e);

}

}

log.debug("Beginning shutdown of Kafka producer I/O thread, sending remaining records.");

// okay we stopped accepting requests but there may still be

// requests in the accumulator or waiting for acknowledgment,

// wait until these are completed.

while (!forceClose && (this.accumulator.hasUnsent() || this.client.inFlightRequestCount() > 0)) {

try {

run(time.milliseconds());

} catch (Exception e) {

log.error("Uncaught error in kafka producer I/O thread: ", e);

}

}

if (forceClose) {

// We need to fail all the incomplete batches and wake up the threads waiting on

// the futures.

this.accumulator.abortIncompleteBatches();

}

try {

this.client.close();

} catch (Exception e) {

log.error("Failed to close network client", e);

}

log.debug("Shutdown of Kafka producer I/O thread has completed.");

}

以上的run()方法中,出现了两个while判断,本意都是为了保持线程的不间断运行,将消息发送到broker,两处都调用了另外的一个带时间参数的run(xx)重载方法,第一个run(ts)方法是为了将消息缓存区中的消息发送给broker,第二个run(ts)方法会先判断线程是否强制关闭,如果没有强制关闭,则会将消息缓存区中未发送出去的消息发送完毕,然后才退出线程。

/**

* Run a single iteration of sending

*

* @param now

* The current POSIX time in milliseconds

*/

void run(long now) {

//第一步,获取元数据

Cluster cluster = metadata.fetch();

// get the list of partitions with data ready to send

//第二步,判断哪些partition满足发送条件

RecordAccumulator.ReadyCheckResult result = this.accumulator.ready(cluster, now);

/**

* 第三步,标识还没有拉取到元数据的topic

*/

if (!result.unknownLeaderTopics.isEmpty()) {

// The set of topics with unknown leader contains topics with leader election pending as well as

// topics which may have expired. Add the topic again to metadata to ensure it is included

// and request metadata update, since there are messages to send to the topic.

for (String topic : result.unknownLeaderTopics)

this.metadata.add(topic);

this.metadata.requestUpdate();

}

// remove any nodes we aren't ready to send to

Iterator<Node> iter = result.readyNodes.iterator();

long notReadyTimeout = Long.MAX_VALUE;

while (iter.hasNext()) {

Node node = iter.next();

/**

* 第四步,检查与要发送数据的主机的网络是否已经建立好。

*/

//如果返回的是false

if (!this.client.ready(node, now)) {

//移除result 里面要发送消息的主机。

//所以我们会看到这儿所有的主机都会被移除

iter.remove();

notReadyTimeout = Math.min(notReadyTimeout, this.client.connectionDelay(node, now));

}

}

/**

* 第五步,有可能我们要发送的partition有很多个,这种情况下,有可能会存在这样的情况

* 部分partition的leader partition分布在同一台服务器上面。

*

*

*/

Map<Integer, List<RecordBatch>> batches = this.accumulator.drain(cluster,

result.readyNodes,

this.maxRequestSize,

now);

if (guaranteeMessageOrder) {

// Mute all the partitions drained

//如果batches空的话,跳过不执行。

for (List<RecordBatch> batchList : batches.values()) {

for (RecordBatch batch : batchList)

this.accumulator.mutePartition(batch.topicPartition);

}

}

/**

* 第六步,处理超时的批次

*

*/

List<RecordBatch> expiredBatches = this.accumulator.abortExpiredBatches(this.requestTimeout, now);

// update sensors

for (RecordBatch expiredBatch : expiredBatches)

this.sensors.recordErrors(expiredBatch.topicPartition.topic(), expiredBatch.recordCount);

sensors.updateProduceRequestMetrics(batches);

/**

* 第七步,创建发送消息的请求,以批的形式发送,可以减少网络传输成本,提高吞吐

*/

List<ClientRequest> requests = createProduceRequests(batches, now);

// If we have any nodes that are ready to send have sendable data, poll with 0 timeout so this can immediately

// loop and try sending more data. Otherwise, the timeout is determined by nodes that have partitions with data

// that isn't yet sendable (e.g. lingering, backing off). Note that this specifically does not include nodes

// with sendable data that aren't ready to send since they would cause busy looping.

long pollTimeout = Math.min(result.nextReadyCheckDelayMs, notReadyTimeout);

if (result.readyNodes.size() > 0) {

log.trace("Nodes with data ready to send: {}", result.readyNodes);

log.trace("Created {} produce requests: {}", requests.size(), requests);

pollTimeout = 0;

}

//发送请求的操作

for (ClientRequest request : requests)

//绑定 op_write

client.send(request, now);

// if some partitions are already ready to be sent, the select time would be 0;

// otherwise if some partition already has some data accumulated but not ready yet,

// the select time will be the time difference between now and its linger expiry time;

// otherwise the select time will be the time difference between now and the metadata expiry time;

/**

* 第八步,真正执行网络操作的都是这个NetWordClient这个组件

* 包括:发送请求,接受响应(处理响应)

this.client.poll(pollTimeout, now);

}

以上的run(long)方法执行过程总结为下面几个步骤:

1. 获取集群元数据信息

2. 调用RecordAccumulator的ready()方法,判断当前时间戳哪些partition是可以进行发送,以及获取partition 的leader partition的元数据信息,得知哪些节点是可以接收消息的

3. 标记还没有拉到元数据的topic,如果缓存中存在标识为unknownLeaderTopics的topic信息,则将这些topic添加到metadata中,然后调用metadata的requestUpdate()方法,请求更新元数据

4. 将不需要接收消息的节点从按步骤而返回的结果中删除,只对准备接收消息的节点readyNode进行遍历,检查与要发送的节点的网络是否已经建立好,不符合发送条件的节点都会从readyNode中移除掉

5. 针对以上建立好网络连接的节点集合,调用RecordAccumulator的drain()方法,得到等待发送的消息批次集合

6. 处理超时发送的消息,调用RecordAccumulator的addExpiredBatches()方法,循环遍历RecordBatch,判断其中的消息是否超时,如果超时则从队列中移除,释放资源空间

7. 创建发送消息的请求,调用createProducerRequest方法,将消息批次封装成ClientRequest对象,因为批次通常是多个的,所以返回一个List<ClientRequest>集合

8. 调用NetworkClient的send()方法,绑定KafkaChannel的op_write操作

9. 调用NetworkClient的poll()方法拉取元数据信息,建立连接,执行网络请求,接收响应,完成消息发送

以上就是Sender线程对消息以及集群元数据所发生的核心过程。其中就涉及到了另外一个核心组件NetworkClient。

2. NetworkClient

NetworkClient是消息发送的介质,不管是生产者发送消息,还是消费者接收消息,都需要依赖于NetworkClient建立网络连接。同样的,我们先了解NetworkClient的组成部分,主要涉及NIO的一些知识,有兴趣的童鞋可以看看NIO的原理和组成。

/**

* A network client for asynchronous request/response network i/o. This is an internal class used to implement the

* user-facing producer and consumer clients.

* <p>

* This class is not thread-safe!

*/

public class NetworkClient implements KafkaClient

{

private static final Logger log = LoggerFactory.getLogger(NetworkClient.class);

/* the selector used to perform network i/o */

//java NIO Selector

private final Selectable selector;

private final MetadataUpdater metadataUpdater;

private final Random randOffset;

/* the state of each node's connection */

private final ClusterConnectionStates connectionStates;

/* the set of requests currently being sent or awaiting a response */

private final InFlightRequests inFlightRequests;

/* the socket send buffer size in bytes */

private final int socketSendBuffer;

/* the socket receive size buffer in bytes */

private final int socketReceiveBuffer;

/* the client id used to identify this client in requests to the server */

private final String clientId;

/* the current correlation id to use when sending requests to servers */

private int correlation;

/* max time in ms for the producer to wait for acknowledgement from server*/

private final int requestTimeoutMs;

private final Time time;

......

}

可以看到NetworkClient实现了KafkaClient接口,包括了几个核心类Selectable、MetadataUpdater、ClusterConnectionStates、InFlightRequests。

2.1 Selectable

其中Selectable是实现异步非阻塞网络IO的接口,通过类的注释可以知道Selectable可以使用单个线程来管理多个网络连接,包括读、写、连接等操作,这个和NIO是一致的。

我们先看看Selectable的实现类Selector,是org.apache.kafka.common.network包下的,源码内容比较多,挑相对比较重要的看。

public class Selector implements Selectable {

public static final long NO_IDLE_TIMEOUT_MS = -1;

private static final Logger log = LoggerFactory.getLogger(Selector.class);

//这个对象就是javaNIO里面的Selector

//Selector是负责网络的建立,发送网络请求,处理实际的网络IO。

//可以算是最核心的一个组件。

private final java.nio.channels.Selector nioSelector;

//broker 和 KafkaChannel(SocketChnnel)的映射

//这儿的kafkaChannel大家暂时可以理解为就是SocketChannel

//维护NodeId和KafkaChannel的映射关系

private final Map<String, KafkaChannel> channels;

//记录已经完成发送的请求

private final List<Send> completedSends;

//记录已经接收到的,并且处理完了的响应。

private final List<NetworkReceive> completedReceives;

//已经接收到了,但是还没来得及处理的响应。

//一个连接,对应一个响应队列

private final Map<KafkaChannel, Deque<NetworkReceive>> stagedReceives;

private final Set<SelectionKey> immediatelyConnectedKeys;

//没有建立连接或者或者端口连接的主机

private final List<String> disconnected;

//完成建立连接的主机

private final List<String> connected;

//建立连接失败的主机。

private final List<String> failedSends;

private final Time time;

private final SelectorMetrics sensors;

private final String metricGrpPrefix;

private final Map<String, String> metricTags;

//用于创建KafkaChannel的Builder

private final ChannelBuilder channelBuilder;

private final int maxReceiveSize;

private final boolean metricsPerConnection;

private final IdleExpiryManager idleExpiryManager;

发起网络请求的第一步是连接、注册事件、发送、消息处理,涉及几个核心方法

1. 连接connect()方法

/**

* Begin connecting to the given address and add the connection to this nioSelector associated with the given id

* number.

* <p>

* Note that this call only initiates the connection, which will be completed on a future {@link #poll(long)}

* call. Check {@link #connected()} to see which (if any) connections have completed after a given poll call.

* @param id The id for the new connection

* @param address The address to connect to

* @param sendBufferSize The send buffer for the new connection

* @param receiveBufferSize The receive buffer for the new connection

* @throws IllegalStateException if there is already a connection for that id

* @throws IOException if DNS resolution fails on the hostname or if the broker is down

*/

@Override

public void connect(String id, InetSocketAddress address, int sendBufferSize, int receiveBufferSize) throws IOException {

if (this.channels.containsKey(id))

throw new IllegalStateException("There is already a connection for id " id);

//获取到SocketChannel

SocketChannel socketChannel = SocketChannel.open();

//设置为非阻塞的模式

socketChannel.configureBlocking(false);

Socket socket = socketChannel.socket();

socket.setKeepAlive(true);

//设置网络参数,如发送和接收的buffer大小

if (sendBufferSize != Selectable.USE_DEFAULT_BUFFER_SIZE)

socket.setSendBufferSize(sendBufferSize);

if (receiveBufferSize != Selectable.USE_DEFAULT_BUFFER_SIZE)

socket.setReceiveBufferSize(receiveBufferSize);

//这个的默认值是false,代表要开启Nagle的算法

//它会把网络中的一些小的数据包收集起来,组合成一个大的数据包,再进行发送

//因为它认为如果网络中有大量的小的数据包在传输则会影响传输效率

socket.setTcpNoDelay(true);

boolean connected;

try {

//尝试去服务器去连接,因为这儿非阻塞的

//有可能就立马连接成功,如果成功了就返回true

//也有可能需要很久才能连接成功,返回false。

connected = socketChannel.connect(address);

} catch (UnresolvedAddressException e) {

socketChannel.close();

throw new IOException("Can't resolve address: " address, e);

} catch (IOException e) {

socketChannel.close();

throw e;

}

//SocketChannel往Selector上注册了一个OP_CONNECT

SelectionKey key = socketChannel.register(nioSelector, SelectionKey.OP_CONNECT);

//根据SocketChannel封装出一个KafkaChannel

KafkaChannel channel = channelBuilder.buildChannel(id, key, maxReceiveSize);

//把key和KafkaChannel关联起来

//我们可以根据key就找到KafkaChannel

//也可以根据KafkaChannel找到key

key.attach(channel);

//缓存起来

this.channels.put(id, channel);

//如果连接上了

if (connected) {

// OP_CONNECT won't trigger for immediately connected channels

log.debug("Immediately connected to node {}", channel.id());

immediatelyConnectedKeys.add(key);

// 取消前面注册 OP_CONNECT 事件。

key.interestOps(0);

}

}

2. 注册register()

/**

* Register the nioSelector with an existing channel

* Use this on server-side, when a connection is accepted by a different thread but processed by the Selector

* Note that we are not checking if the connection id is valid - since the connection already exists

*/

public void register(String id, SocketChannel socketChannel) throws ClosedChannelException {

//往自己的Selector上面注册OP_READ事件

//这样的话,Processor线程就可以读取客户端发送过来的连接。

SelectionKey key = socketChannel.register(nioSelector, SelectionKey.OP_READ);

//kafka里面对SocketChannel封装了一个KakaChannel

KafkaChannel channel = channelBuilder.buildChannel(id, key, maxReceiveSize);

//key和channel

key.attach(channel);

//所以我们服务端这儿代码跟我们客户端的网络部分的代码是复用的

//channels里面维护了多个网络连接。

this.channels.put(id, channel);

}

3. 发送send()

/**

* Queue the given request for sending in the subsequent {@link #poll(long)} calls

* @param send The request to send

*/

public void send(Send send) {

//获取到一个KafakChannel

KafkaChannel channel = channelOrFail(send.destination());

try {

//重要方法

channel.setSend(send);

} catch (CancelledKeyException e) {

this.failedSends.add(send.destination());

close(channel);

}

}

4. 消息处理poll()

@Override

public void poll(long timeout) throws IOException {

if (timeout < 0)

throw new IllegalArgumentException("timeout should be >= 0");

//将上一次poll()方法返回的结果清空

clear();

if (hasStagedReceives() || !immediatelyConnectedKeys.isEmpty())

timeout = 0;

/* check ready keys */

long startSelect = time.nanoseconds();

//从Selector上找到有多少个key注册了,等待I/O事件发生

int readyKeys = select(timeout);

long endSelect = time.nanoseconds();

this.sensors.selectTime.record(endSelect - startSelect, time.milliseconds());

//上面刚刚确实是注册了一个key

if (readyKeys > 0 || !immediatelyConnectedKeys.isEmpty()) {

//处理I/O事件,对这个Selector上面的key要进行处理

pollSelectionKeys(this.nioSelector.selectedKeys(), false, endSelect);

pollSelectionKeys(immediatelyConnectedKeys, true, endSelect);

}

// 对stagedReceives里面的数据要进行处理

addToCompletedReceives();

long endIo = time.nanoseconds();

this.sensors.ioTime.record(endIo - endSelect, time.milliseconds());

// we use the time at the end of select to ensure that we don't close any connections that

// have just been processed in pollSelectionKeys

//完成处理后,关闭长链接

maybeCloseOldestConnection(endSelect);

}

5. 处理Selector上面的key

//用来处理OP_CONNECT,OP_READ,OP_WRITE事件,同时负责检测连接状态

private void pollSelectionKeys(Iterable<SelectionKey> selectionKeys,

boolean isImmediatelyConnected,

long currentTimeNanos) {

//获取到所有key

Iterator<SelectionKey> iterator = selectionKeys.iterator();

//遍历所有的key

while (iterator.hasNext()) {

SelectionKey key = iterator.next();

iterator.remove();

//根据key找到对应的KafkaChannel

KafkaChannel channel = channel(key);

// register all per-connection metrics at once

sensors.maybeRegisterConnectionMetrics(channel.id());

if (idleExpiryManager != null)

idleExpiryManager.update(channel.id(), currentTimeNanos);

try {

/* complete any connections that have finished their handshake (either normally or immediately) */

//处理完成连接和OP_CONNECT的事件

if (isImmediatelyConnected || key.isConnectable()) {

//完成网络的连接。

if (channel.finishConnect()) {

//网络连接已经完成了以后,就把这个channel添加到已连接的集合中

this.connected.add(channel.id());

this.sensors.connectionCreated.record();

SocketChannel socketChannel = (SocketChannel) key.channel();

log.debug("Created socket with SO_RCVBUF = {}, SO_SNDBUF = {}, SO_TIMEOUT = {} to node {}",

socketChannel.socket().getReceiveBufferSize(),

socketChannel.socket().getSendBufferSize(),

socketChannel.socket().getSoTimeout(),

channel.id());

} else

continue;

}

/* if channel is not ready finish prepare */

//身份认证

if (channel.isConnected() && !channel.ready())

channel.prepare();

/* if channel is ready read from any connections that have readable data */

if (channel.ready() && key.isReadable() && !hasStagedReceive(channel)) {

NetworkReceive networkReceive;

//处理OP_READ事件,接受服务端发送回来的响应(请求)

//networkReceive 代表的就是一个服务端发送回来的响应

while ((networkReceive = channel.read()) != null)

addToStagedReceives(channel, networkReceive);

}

/* if channel is ready write to any sockets that have space in their buffer and for which we have data */

//处理OP_WRITE事件

if (channel.ready() && key.isWritable()) {

//获取到要发送的那个网络请求,往服务端发送数据

//如果消息被发送出去了,就会移除OP_WRITE

Send send = channel.write();

//已经完成响应消息的发送

if (send != null) {

this.completedSends.add(send);

this.sensors.recordBytesSent(channel.id(), send.size());

}

}

/* cancel any defunct sockets */

if (!key.isValid()) {

close(channel);

this.disconnected.add(channel.id());

}

} catch (Exception e) {

String desc = channel.socketDescription();

if (e instanceof IOException)

log.debug("Connection with {} disconnected", desc, e);

else

log.warn("Unexpected error from {}; closing connection", desc, e);

close(channel);

//添加到连接失败的集合中

this.disconnected.add(channel.id());

}

}

}

2.2 MetadataUpdater

是NetworkClient用于请求更新集群元数据信息并检索集群节点的接口,是一个非线程安全的内部类,有两个实现类,分别是DefaultMetadataUpdater和ManualMetadataUpdater,NetworkClient用到的是DefaultMetadataUpdater类,是NetworkClient的默认实现类,同时是NetworkClient的内部类,从源码可以看到,如下。

if (metadataUpdater == null) {

if (metadata == null)

throw new IllegalArgumentException("`metadata` must not be null");

this.metadataUpdater = new DefaultMetadataUpdater(metadata);

} else {

this.metadataUpdater = metadataUpdater;

}

......

class DefaultMetadataUpdater implements MetadataUpdater

{

/* the current cluster metadata */

//集群元数据对象

private final Metadata metadata;

/* true if there is a metadata request that has been sent and for which we have not yet received a response */

//用来标识是否已经发送过MetadataRequest,若是已发送,则无需重复发送

private boolean metadataFetchInProgress;

/* the last timestamp when no broker node is available to connect */

//记录没有发现可用节点的时间戳

private long lastNoNodeAvailableMs;

DefaultMetadataUpdater(Metadata metadata) {

this.metadata = metadata;

this.metadataFetchInProgress = false;

this.lastNoNodeAvailableMs = 0;

}

//返回集群节点集合

@Override

public List<Node> fetchNodes() {

return metadata.fetch().nodes();

}

@Override

public boolean isUpdateDue(long now) {

return !this.metadataFetchInProgress && this.metadata.timeToNextUpdate(now) == 0;

}

//核心方法,判断当前集群保存的元数据是否需要更新,如果需要更新则发送MetadataRequest请求

@Override

public long maybeUpdate(long now) {

// should we update our metadata?

//获取下次更新元数据的时间戳

long timeToNextMetadataUpdate = metadata.timeToNextUpdate(now);

//获取下次重试连接服务端的时间戳

long timeToNextReconnectAttempt = Math.max(this.lastNoNodeAvailableMs metadata.refreshBackoff() - now, 0);

//检测是否已经发送过MetadataRequest请求

long waitForMetadataFetch = this.metadataFetchInProgress ? Integer.MAX_VALUE : 0;

// if there is no node available to connect, back off refreshing metadata

long metadataTimeout = Math.max(Math.max(timeToNextMetadataUpdate, timeToNextReconnectAttempt),

waitForMetadataFetch);

if (metadataTimeout == 0) {

// Beware that the behavior of this method and the computation of timeouts for poll() are

// highly dependent on the behavior of leastLoadedNode.

//找到负载最小的节点

Node node = leastLoadedNode(now);

// 创建MetadataRequest请求,待触发poll()方法执行真正的发送操作。

maybeUpdate(now, node);

}

return metadataTimeout;

}

//处理没有建立好连接的请求

@Override

public boolean maybeHandleDisconnection(ClientRequest request) {

ApiKeys requestKey = ApiKeys.forId(request.request().header().apiKey());

if (requestKey == ApiKeys.METADATA && request.isInitiatedByNetworkClient()) {

Cluster cluster = metadata.fetch();

if (cluster.isBootstrapConfigured()) {

int nodeId = Integer.parseInt(request.request().destination());

Node node = cluster.nodeById(nodeId);

if (node != null)

log.warn("Bootstrap broker {}:{} disconnected", node.host(), node.port());

}

metadataFetchInProgress = false;

return true;

}

return false;

}

//解析响应信息

@Override

public boolean maybeHandleCompletedReceive(ClientRequest req, long now, Struct body) {

short apiKey = req.request().header().apiKey();

//检查是否为MetadataRequest请求

if (apiKey == ApiKeys.METADATA.id && req.isInitiatedByNetworkClient()) {

// 处理响应

handleResponse(req.request().header(), body, now);

return true;

}

return false;

}

@Override

public void requestUpdate() {

this.metadata.requestUpdate();

}

//处理MetadataRequest请求响应

private void handleResponse(RequestHeader header, Struct body, long now) {

this.metadataFetchInProgress = false;

//因为服务端发送回来的是一个二进制的数据结构

//所以生产者这儿要对这个数据结构要进行解析

//解析完了以后就封装成一个MetadataResponse对象。

MetadataResponse response = new MetadataResponse(body);

//响应里面会带回来元数据的信息

//获取到了从服务端拉取的集群的元数据信息。

Cluster cluster = response.cluster();

// check if any topics metadata failed to get updated

Map<String, Errors> errors = response.errors();

if (!errors.isEmpty())

log.warn("Error while fetching metadata with correlation id {} : {}", header.correlationId(), errors);

// don't update the cluster if there are no valid nodes...the topic we want may still be in the process of being

// created which means we will get errors and no nodes until it exists

//如果正常获取到了元数据的信息

if (cluster.nodes().size() > 0) {

//更新元数据信息。

this.metadata.update(cluster, now);

} else {

log.trace("Ignoring empty metadata response with correlation id {}.", header.correlationId());

this.metadata.failedUpdate(now);

}

}

/**

* Create a metadata request for the given topics

*/

private ClientRequest request(long now, String node, MetadataRequest metadata) {

RequestSend send = new RequestSend(node, nextRequestHeader(ApiKeys.METADATA), metadata.toStruct());

return new ClientRequest(now, true, send, null, true);

}

/**

* Add a metadata request to the list of sends if we can make one

*/

private void maybeUpdate(long now, Node node) {

//检测node是否可用

if (node == null) {

log.debug("Give up sending metadata request since no node is available");

// mark the timestamp for no node available to connect

this.lastNoNodeAvailableMs = now;

return;

}

String nodeConnectionId = node.idString();

//判断网络连接是否应建立好,是否可用向该节点发送请求

if (canSendRequest(nodeConnectionId)) {

this.metadataFetchInProgress = true;

MetadataRequest metadataRequest;

//指定需要更新元数据的topic

if (metadata.needMetadataForAllTopics())

//封装请求(获取所有topics)的元数据信息的请求

metadataRequest = MetadataRequest.allTopics();

else

//我们默认走的这儿的这个方法

//就是拉取我们发送消息的对应的topic的方法

metadataRequest = new MetadataRequest(new ArrayList<>(metadata.topics()));

//这儿就给我们创建了一个请求(拉取元数据的)

ClientRequest clientRequest = request(now, nodeConnectionId, metadataRequest);

log.debug("Sending metadata request {} to node {}", metadataRequest, node.id());

//缓存请求,待下次触发poll()方法执行发送操作

doSend(clientRequest, now);

} else if (connectionStates.canConnect(nodeConnectionId, now)) {

// we don't have a connection to this node right now, make one

log.debug("Initialize connection to node {} for sending metadata request", node.id());

//初始化连接

initiateConnect(node, now);

// If initiateConnect failed immediately, this node will be put into blackout and we

// should allow immediately retrying in case there is another candidate node. If it

// is still connecting, the worst case is that we end up setting a longer timeout

// on the next round and then wait for the response.

} else { // connected, but can't send more OR connecting

// In either case, we just need to wait for a network event to let us know the selected

// connection might be usable again.

this.lastNoNodeAvailableMs = now;

}

}

}

将ClientRequest请求缓存到InFlightRequest缓存队列中。

private void doSend(ClientRequest request, long now) {

request.setSendTimeMs(now);

//这儿往inFlightRequests缓存队列里面还没有收到响应的请求,默认最多能存5个请求

this.inFlightRequests.add(request);

//然后不断调用Selector的send()方法

selector.send(request.request());

}

2.3 InFlightRequests

这个类是一个请求队列,用于缓存已经发送出去但是没有收到响应的ClientRequest,提供了许多管理缓存队列的方法,支持通过配置参数控制ClientRequest的数量,通过源码可以看到其底层数据结构Map<String, Deque<ClientRequest>>。

/**

* The set of requests which have been sent or are being sent but haven't yet received a response

*/

final class InFlightRequests {

private final int maxInFlightRequestsPerConnection;

private final Map<String, Deque<ClientRequest>> requests = new HashMap<>();

public InFlightRequests(int maxInFlightRequestsPerConnection) {

this.maxInFlightRequestsPerConnection = maxInFlightRequestsPerConnection;

}

......

}

除了包含了很多关于处理队列的方法之外,有一个比较重要的方法着重看一下canSendMore()。

/**

* Can we send more requests to this node?

*

* @param node Node in question

* @return true iff we have no requests still being sent to the given node

*/

public boolean canSendMore(String node) {

//获得要发送到节点的ClientRequest队列

Deque<ClientRequest> queue = requests.get(node);

//如果节点出现请求堆积,未及时处理,则有可能出现请求超时的情况

return queue == null || queue.isEmpty() ||

(queue.peekFirst().request().completed()

&& queue.size() < this.maxInFlightRequestsPerConnection);

}

了解完上面几个核心类之后,我们开始剖析NetworkClient的流程和实现。

2.4 NetworkClient

Kafka中所有的消息都都需要借助NetworkClient与上下游建立发送通道,其重要性不言而喻。这里我们只考虑消息成功的流程,异常处理不做解析,相对而言没那么重要,消息发送的流程大致如下:

1. 首先调用ready()方法,判断节点是否具备发送消息的条件

2. 通过isReady()方法判断是否可以往节点发送更多请求,用来检查是否有请求堆积

3. 使用initiateConnect初始化连接

4. 然后调用selector的connect()方法建立连接

5. 获取SocketChannel,与服务端建立连接

6. SocketChannel往Selector注册OP_CONNECT事件

7. 调用send()方式发送请求

8. 调用poll()方法处理请求

下面就根据消息发送流程涉及的核心方法进行剖析,了解每个流程中涉及的主要操作。

1. 检查节点是否满足消息发送条件

/**

* Begin connecting to the given node, return true if we are already connected and ready to send to that node.

*

* @param node The node to check

* @param now The current timestamp

* @return True if we are ready to send to the given node

*/

@Override

public boolean ready(Node node, long now) {

if (node.isEmpty())

throw new IllegalArgumentException("Cannot connect to empty node " node);

//判断要发送消息的主机,是否具备发送消息的条件

if (isReady(node, now))

return true;

//判断是否可以尝试去建立网络

if (connectionStates.canConnect(node.idString(), now))

// if we are interested in sending to a node and we don't have a connection to it, initiate one

//初始化连接

//绑定了 连接到事件而已

initiateConnect(node, now);

return false;

}

2. 初始化连接

/**

* Initiate a connection to the given node

*/

private void initiateConnect(Node node, long now) {

String nodeConnectionId = node.idString();

try {

log.debug("Initiating connection to node {} at {}:{}.", node.id(), node.host(), node.port());

this.connectionStates.connecting(nodeConnectionId, now);

//开始建立连接

selector.connect(nodeConnectionId,

new InetSocketAddress(node.host(), node.port()),

this.socketSendBuffer,

this.socketReceiveBuffer);

} catch (IOException e) {

/* attempt failed, we'll try again after the backoff */

connectionStates.disconnected(nodeConnectionId, now);

/* maybe the problem is our metadata, update it */

metadataUpdater.requestUpdate();

log.debug("Error connecting to node {} at {}:{}:", node.id(), node.host(), node.port(), e);

}

}

3. initiateConnect()方法中调用的connect()方法就是Selectable实现类Selector的connect()方法,包括获取SocketChannel并注册OP_CONNECT、OP_READ、 OP_WRITE事件上面已经分析了,这里不做赘述,完成以上一系列建立网络连接的动作之后将消息请求发送到下游节点,Sender的send()方法会调用NetworkClient的send()方法进行发送,而NetworkClient的send()方法最终调用了Selector的send()方法。

/**

* Queue up the given request for sending. Requests can only be sent out to ready nodes.

*

* @param request The request

* @param now The current timestamp

*/

@Override

public void send(ClientRequest request, long now) {

String nodeId = request.request().destination();

//判断已经建立连接状态的节点能否接收更多请求

if (!canSendRequest(nodeId))

throw new IllegalStateException("Attempt to send a request to node " nodeId " which is not ready.");

//发送ClientRequest

doSend(request, now);

}

private void doSend(ClientRequest request, long now) {

request.setSendTimeMs(now);

//缓存请求

this.inFlightRequests.add(request);

selector.send(request.request());

}

4. 最后调用poll()方法处理请求

/**

* Do actual reads and writes to sockets.

*

* @param timeout The maximum amount of time to wait (in ms) for responses if there are none immediately,

* must be non-negative. The actual timeout will be the minimum of timeout, request timeout and

* metadata timeout

* @param now The current time in milliseconds

* @return The list of responses received

*/

@Override

public List<ClientResponse> poll(long timeout, long now) {

//步骤一:请求更新元数据

long metadataTimeout = metadataUpdater.maybeUpdate(now);

try {

//步骤二:执行I/O操作,发送请求

this.selector.poll(Utils.min(timeout, metadataTimeout, requestTimeoutMs));

} catch (IOException e) {

log.error("Unexpected error during I/O", e);

}

// process completed actions

long updatedNow = this.time.milliseconds();

List<ClientResponse> responses = new ArrayList<>();

//步骤三:处理各种响应

handleCompletedSends(responses, updatedNow);

handleCompletedReceives(responses, updatedNow);

handleDisconnections(responses, updatedNow);

handleConnections();

handleTimedOutRequests(responses, updatedNow);

// invoke callbacks

//循环调用ClientRequest的callback回调函数

for (ClientResponse response : responses) {

if (response.request().hasCallback()) {

try {

response.request().callback().onComplete(response);

} catch (Exception e) {

log.error("Uncaught error in request completion:", e);

}

}

}

return responses;

}

有兴趣的童鞋可以继续深究回调函数的逻辑和Selector的操作。

补充说明:

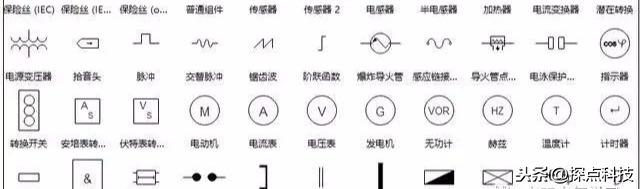

上面有一个点没有涉及到,就是kafka的内存池,可以去看一下BufferPool这个类,这一块知识点应该是要在上一篇文章说的,突然想起来漏掉了,在这里做一下补充,对应就是我们前面说到的RecordAccumulator这个类的数据结构,封装好RecordAccumulator对象是有多个Dqueue组成,每个Dqueue由多个RecordBatch组成,除此之外,RecordAccumulator还包括了BufferPool内存池,这里再稍微回忆一下,RecordAccumulator类初始化了ConcurrentMap<TopicPartition, Deque<RecordBatch>> 这样的数据结构以及实例化了BufferPool,也就是内存池,为了方便理解,这里画一下内存池的内部构造图。

public final class RecordAccumulator {

......

private final BufferPool free;

......

private final ConcurrentMap<TopicPartition, Deque<RecordBatch>> batches;

}

如图所示,我们重点关于内存的分配allocate()和释放deallocate()这个两个方法,有兴趣的小伙伴可以私底下看一下,这个类的代码总共也就三百多行,内容不是很多,欢迎一起交流学习,这里就不做展开了,免得影响本文的主题。

3. 小结

本文主要是剖析Kafka生产者发送消息的真正执行者Sender线程以及作为消息上下游的传输通道NetworkClient组件,主要涉及到NIO的应用,同时介绍了发送消息主要涉及的核心依赖类。写这篇文章主要是起到一个承上启下的作用,既是对前面分析Kafka生产者发送消息的补充,同时也为接下来剖析消费者消费上游消息作铺垫,写得有点不成体系,写文章的思路主要考虑用总分的思路作为线索去分析,个人觉得篇幅过长不方便阅读,所以会尽量精简,重点分析核心方法和流程,希望对读者有所帮助。

免责声明:本文仅代表文章作者的个人观点,与本站无关。其原创性、真实性以及文中陈述文字和内容未经本站证实,对本文以及其中全部或者部分内容文字的真实性、完整性和原创性本站不作任何保证或承诺,请读者仅作参考,并自行核实相关内容。文章投诉邮箱:anhduc.ph@yahoo.com